by Martin Laukkanen | Oct 17, 2016 | Reporting

Microsoft PowerBI is one of the best new BI apps from Microsoft since SSRS (which still doesn’t work in the cloud?!). However, there are no out-of-the-box web parts available for Office365 or Project Online users, unless of course you’re happy to publish your reports unauthenticated to the whole world by using the PowerBI embed option!

There is an add-in available on the SharePoint app store (Power BI Tiles for SharePoint), but I couldn’t find anything out there if you just want a quick PowerBI report displayed. After reading a great example of creating your own Provider hosted app in the blog post “Part 1: How to embed PowerBI in a SharePoint site”, I thought that there must be an easier way.

Content Editor Web Part and a little JavaScript

Note: this solution only applies to Office 365 and Project Online, sorry on-prem people..

While it would be very easy to embed a ‘Shared’ report into an iFrame in a content editor web part (CEWP), that requires the report to be accessible anonymously, which is out of the question in just about any corporate instance. So the big challenge with displaying a PowerBI report is that you must first authenticate the current user to Microsoft’s servers. This is where ADAL.js comes in: Microsoft’s Azure AD JavaScript library makes this all possible.

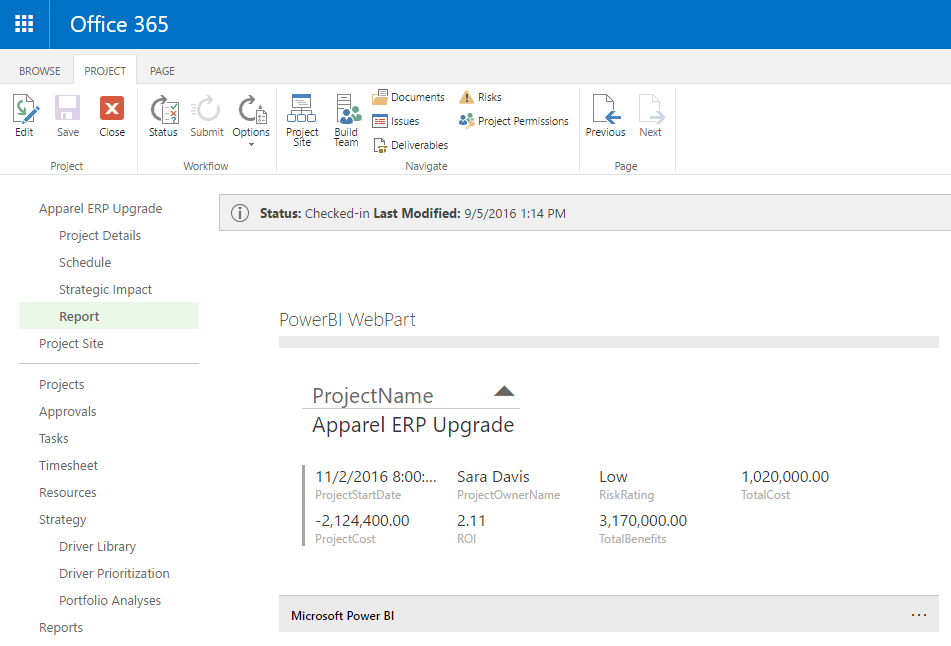

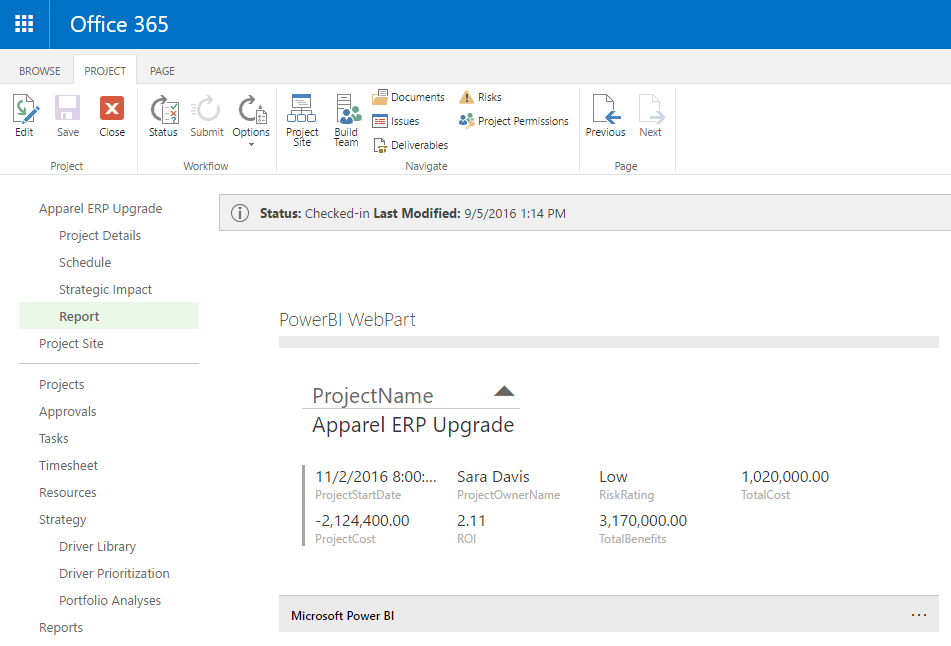

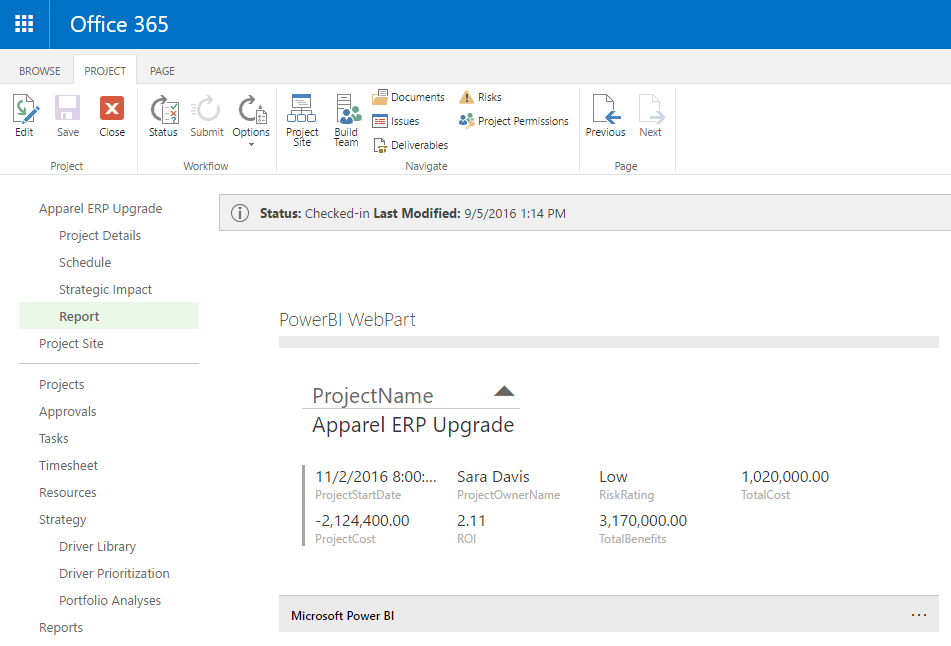

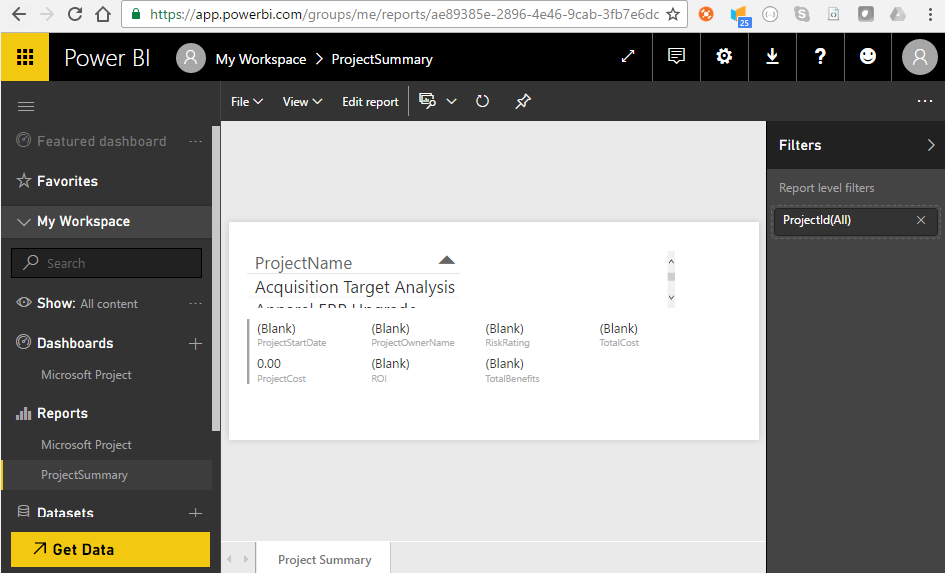

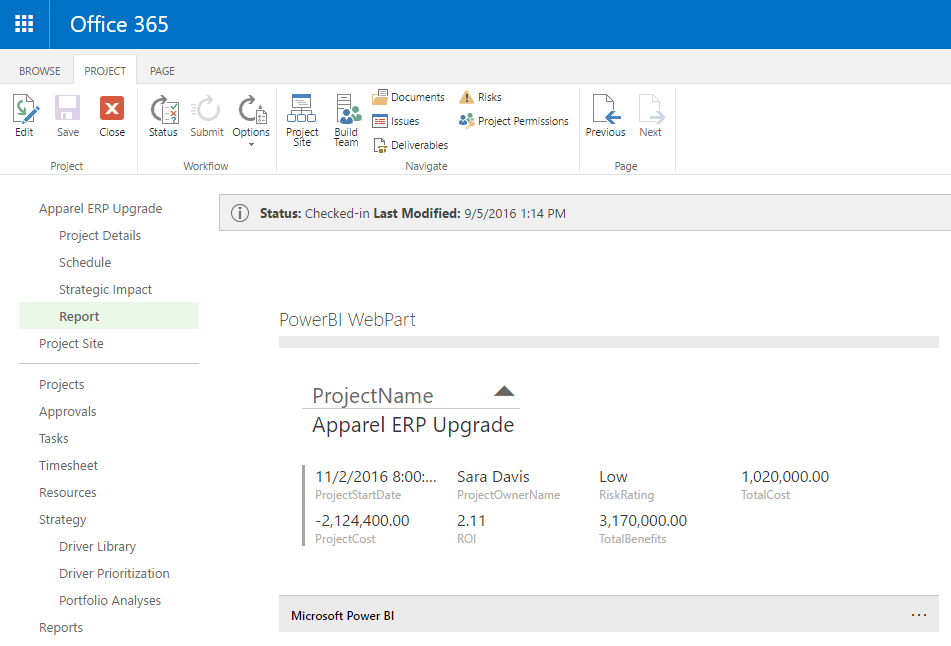

Screenshot of the finished product:

Overview of the Script

The script, while short (scroll down for a sneak peek), does a lot!

- It will authenticate the user with Microsoft; normally this will use the user’s current logged-in session, so you won’t see the prompt

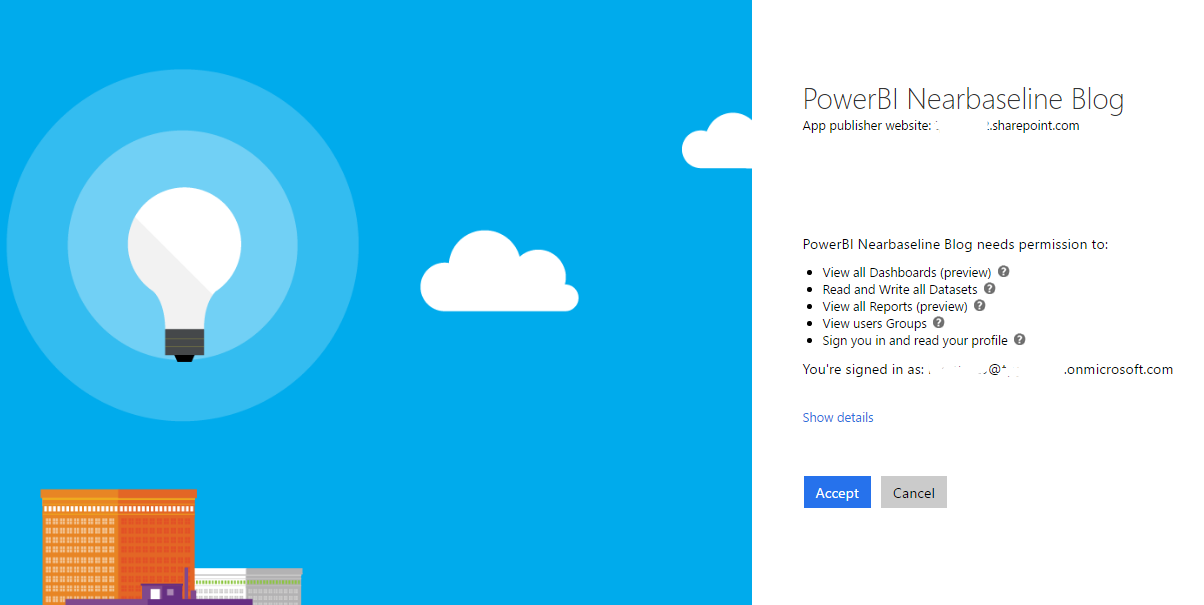

- On first load it will request permission to access PowerBI – this step only happens once per user and covers all web parts / reports configured

- Once it has a login token it will cache it for future requests (it’s valid for a few hours/days I think?)

- Finally, using the security token, it will load a specific report in an iFrame displayed in a CEWP on the page

Additionally using an oDataFilter you can pass parameters like ProjectUid or similar to the report.
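As a rough illustration of what such a filter string looks like, here is a minimal sketch. The helper name buildODataFilter is hypothetical (it is not part of PowerBI or ADAL), and the table and field names are the ones used in the script later in this post. The one rule it relies on is that OData string literals escape a single quote by doubling it.

```javascript
// Hypothetical helper (not part of PowerBI or ADAL): builds a filter such as
//   ProjectData/ProjectId eq '<guid>'
function buildODataFilter(table, field, value) {
  // OData string literals escape a single quote by doubling it.
  var escaped = String(value).replace(/'/g, "''");
  return table + "/" + field + " eq '" + escaped + "'";
}

// Placeholder GUID; on a PDP this would be window.PDP_projUid.
var exampleFilter = buildODataFilter(
  "ProjectData",
  "ProjectId",
  "00000000-0000-0000-0000-000000000000"
);
console.log(exampleFilter);
```

Building the string in one place makes it easier to swap ProjectId for another report-level filter field later.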

Requirements

This solution requires the following:

- Office 365 / Project Online tenant

- An Azure AD application, but we don’t need anything fancy like dirsync set up (note that every O365 tenant today has an Azure AD directory automatically created, so there is no additional cost here)

- All users who will view the web part require at least the PowerBI free license in O365

- To set this up you’ll need permissions to configure Azure AD, so probably global admin in Office 365.

Configuration Steps

First up you’ll need to create an Azure AD Application in your tenant to give your users permission to view PowerBI reports. Don’t panic, this is not a full-blown SharePoint app we’re creating; it’s just a simple xml manifest that defines which permissions this script will be allowed! To do so, go here:

1. Register the Azure AD Application

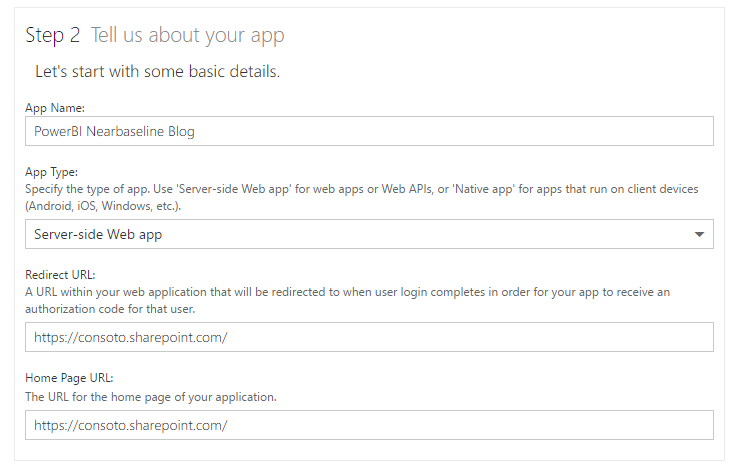

Register an Application for Power BI – https://dev.powerbi.com/apps

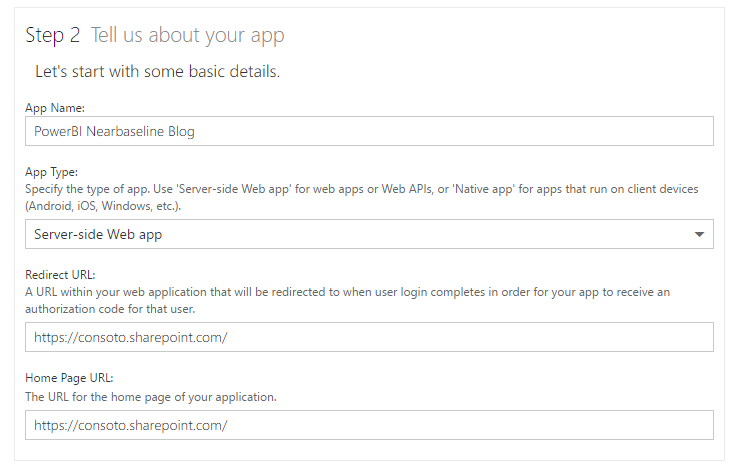

Configure the following settings:

The most important setting is the Redirect URL, which must match your Office365 tenant name. It could be a subsite, but ADAL will allow any site below this URL to use the script.

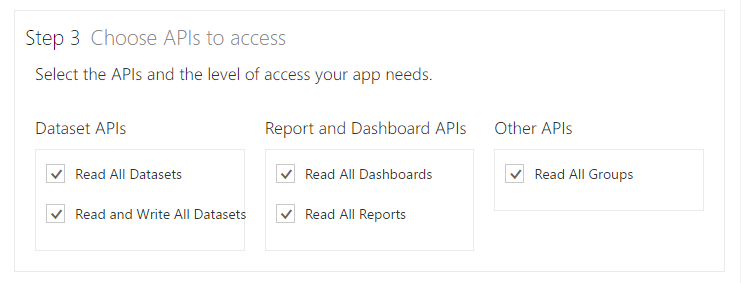

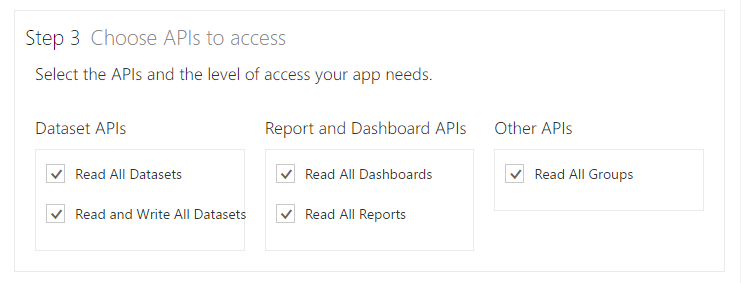

Then make sure to pick the appropriate permissions, I’m going to go with everything for this example.

Finally click Register App and it will create a Client ID (a GUID). Save this, as we’ll need it later.

2. Update the Application registration to include Implicit Flow

Unfortunately, as nice as that MS web site in the previous step was, it configures only specific types of apps: ones that rely on a secret key in addition to the client Id. This is not suitable for a JavaScript app, as that would mean the secret key is in plain text in the script! What we need is to enable “implicit flow”. In short, this means that only short-lived, specific access tokens can be issued by this app (i.e. the kind you get when you log on to O365), negating the need for the secret key.

However, we now need to edit this app to enable this functionality. If you skip this step, then when you try to use the script you’ll see the following error:
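To make the implicit flow a little more concrete: in OAuth2 the token comes back to the page in the URL fragment after the redirect, rather than being fetched with a secret. ADAL.js handles this for you, so the sketch below is purely illustrative. The function name parseTokenFragment and the sample fragment are made up; only the parameter names (access_token, token_type, expires_in) come from the OAuth2 implicit grant.

```javascript
// Illustrative only: ADAL.js parses the redirect fragment itself.
// parseTokenFragment is a hypothetical name, not an ADAL function.
function parseTokenFragment(hash) {
  var params = new URLSearchParams(hash.replace(/^#/, ""));
  return {
    accessToken: params.get("access_token"),
    tokenType: params.get("token_type"),
    expiresInSeconds: Number(params.get("expires_in"))
  };
}

// Made-up example of what the fragment can look like after the redirect.
var parsedFragment = parseTokenFragment(
  "#access_token=abc123&token_type=Bearer&expires_in=3600"
);
console.log(parsedFragment.tokenType, parsedFragment.expiresInSeconds);
```

Because the token only ever travels in the fragment of the redirect URL, nothing secret needs to be stored in the script itself.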

ADAL error occurred: AADSTS70005: response_type 'token' is not enabled for the application

Trace ID: ebe80ce0-8365-4f89-b2ee-d10218ffa595

Correlation ID: 491b009c-907b-4c8d-8605-efa34347ff47

So before you go further you’ll need to update the application manifest in Azure AD.

Firstly open https://portal.azure.com and log in with your Office365 admin user credentials (note that the old Management Portal requires a subscription, but the new portal does not if all you’re doing is editing Azure AD).

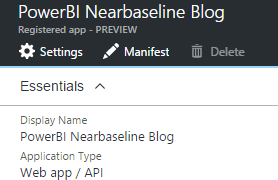

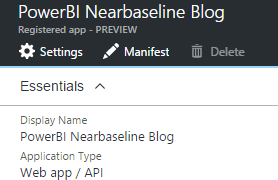

Now from the left menu select Azure Active Directory and the default directory details should load; if not, you’ll need to select the appropriate directory configured by your Admin. Click on App Registrations, then click on the app with the name you created in the previous step.

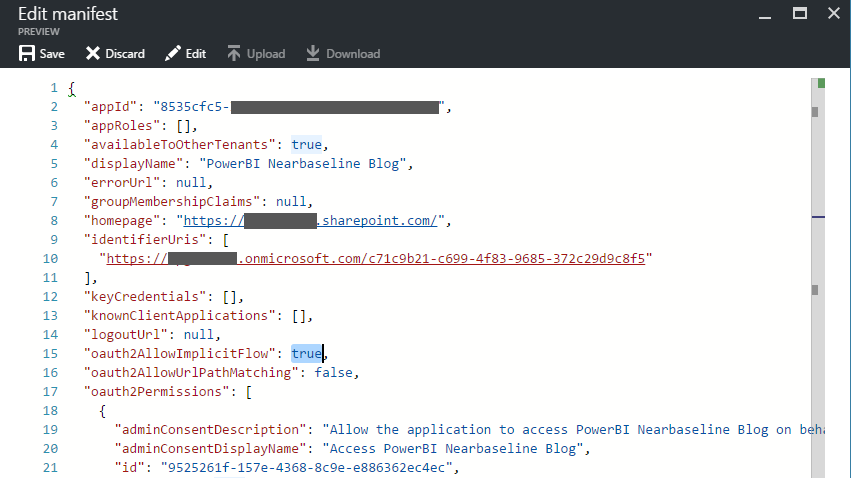

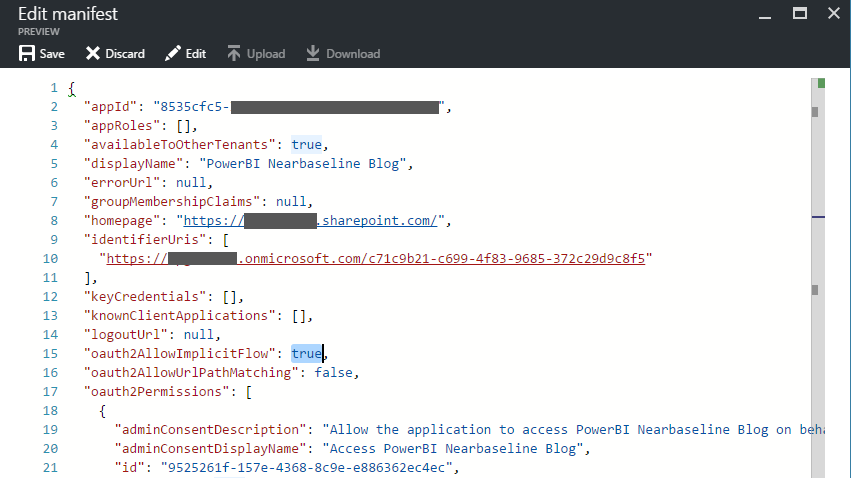

Now you can edit the Manifest: click Manifest in the top menu, where you’ll need to make just one change:

By default the option oauth2AllowImplicitFlow (line 15 above) is set to false, change that to true by editing the value, then save the changes.

Okay, that was the hard bit.

Update Oct 2017: You will need to update your ReplyUrl in the manifest to include a wildcard on the end. This is required if this webpart is installed on a PDP, which has a URL like https://siteurl/PWA/Project%20Detail%20Pages/pdpname.aspx?ProjUid=1324132412124 or similar. Obviously the ProjUid changes for each project, so use a reply URL of something like ‘https://contoso.sharepoint.com/*’ or, more specifically (for better security), ‘https://contoso.sharepoint.com/sites/pwa/Project%20Detail%20Pages/*’.

3. Publish your PowerBI report and get the Guid

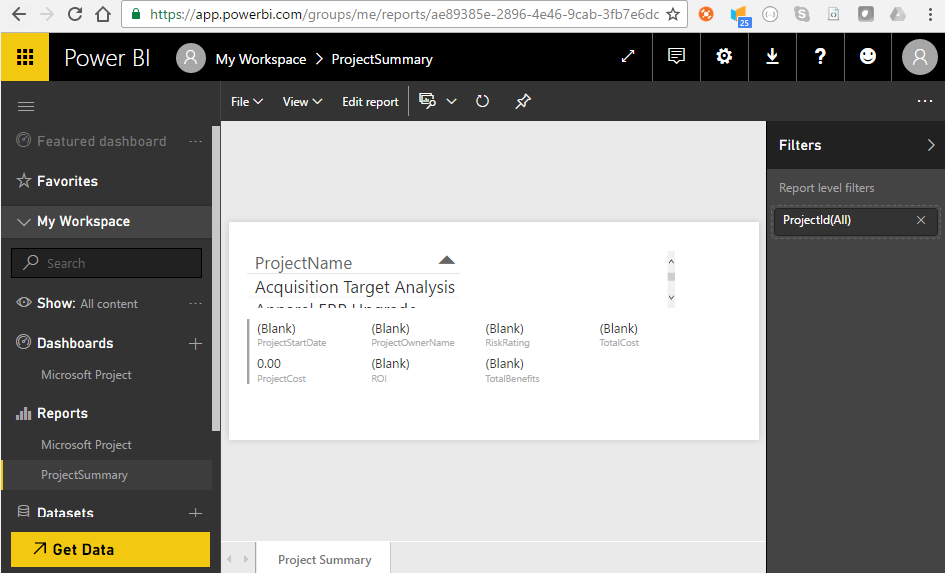

The script I’ve put together is pretty simple, it just authenticates the user then loads a report from PowerBI using the Guid of the report and passing a few other configuration options to suit the requirements (ie display a simple report with minimal UI). So for this you’ll need a report published to PowerBI under your workspace, I’ve just created a simple Project Status report that shows some details and takes a ProjectId parameter:

Once published to PowerBI you can see the Guid of the report in the browser URL bar, in this case: ae89385e-2896-4e46-9cab-3fb7e6dd16c4

You can also see the report level filter configured, “ProjectId”. We’re going to use that in the script as well, since we’ll be adding the webpart to a Project Detail Page (web part page).

4. Configure the script

So here’s the script:

<script src="https://ajax.googleapis.com/ajax/libs/jquery/1.11.1/jquery.min.js"></script>

<script src="https://secure.aadcdn.microsoftonline-p.com/lib/1.0.0/js/adal.min.js"></script>

<script type="text/javascript">

(function() {
    /// PowerBI SharePoint WebPart
    /// By Martin Laukkanen - TPG The Project Group
    /// Including some of http://paulryan.com.au/2015/unified-api-adal/
    /// v0.2.20161016
    "use strict";

    var subscriptionId = '[mycompany].onmicrosoft.com';

    // Copy the client ID of your AAD app here once you have registered one, configured the required permissions, and
    // allowed implicit flow https://msdn.microsoft.com/en-us/office/office365/howto/get-started-with-office-365-unified-api
    var clientId = '00000000-0000-0000-0000-000000000000';

    var config = {
        subscriptionId: subscriptionId,
        clientId: clientId,
        postLogoutRedirectUri: window.location.origin,
        resource: 'https://analysis.windows.net/powerbi/api',
        prompt: 'none',
        cacheLocation: 'localStorage', // enable this for IE, as sessionStorage does not work for localhost.
        embedUrlBase: 'https://app.powerbi.com/reportEmbed',
        reportUid: 'ae89385e-2896-4e46-9cab-3fb7e6dd16c4',
        oDataFilter: "ProjectData/ProjectId eq '" + window.PDP_projUid + "'"
    };

    var authContext = new AuthenticationContext(config);

    // Check For & Handle Redirect From AAD After Login
    var isCallback = authContext.isCallback(window.location.hash);
    authContext.handleWindowCallback();
    if (isCallback && !authContext.getLoginError()) {
        window.location = authContext._getItem(authContext.CONSTANTS.STORAGE.LOGIN_REQUEST);
    }

    // If not logged in force login
    var user = authContext.getCachedUser();
    if (!user) {
        authContext.login();
    }

    // Acquire token for resource.
    authContext.acquireToken(config.resource, function(error, token) {
        // Handle ADAL Errors.
        if (error || !token) {
            console.log('ADAL error occurred: ' + error);
            return;
        }

        // Store our token
        config.authToken = token;

        // Update the report once the page has loaded
        $(document).ready(function() {
            // Update the iFrame
            var iframe = $('#powerBiframe');
            var url = config.embedUrlBase + '?filterPaneEnabled=false&navContentPaneEnabled=false';
            iframe.on('load', postActionLoadReport);
            iframe.attr('src', url);

            // Post the access token to the IFrame
            function postActionLoadReport() {
                var iframe = document.getElementById('powerBiframe');
                var computedStyle = window.getComputedStyle(iframe);

                // Construct the push message structure
                // this structure also supports setting the reportId, groupId, height, and width.
                // when using a report in a group, you must provide the groupId on the iFrame SRC
                var m = {
                    action: "loadReport",
                    reportId: config.reportUid,
                    accessToken: config.authToken,
                    oDataFilter: config.oDataFilter,
                    width: computedStyle.width,
                    height: computedStyle.height
                };
                var message = JSON.stringify(m);

                // push the message.
                iframe.contentWindow.postMessage(message, "*");
            }
        });
    });
})();

</script>

<iframe id="powerBiframe" width="660" src="" height="296" frameborder="0"></iframe>

Note: The script is a .html file, this saves having to have two separate files. Save this file as “PowerBiWebPart.html”.

The script is based on a great ADAL.js example from: http://paulryan.com.au/2015/unified-api-adal/, I’ve just taken the authentication part and then added the PowerBI iFrame components.

What you’ll need to update are the following lines:

- subscriptionId: Replace [mycompany] with your O365 tenant name.

- clientId: Paste your client id for the PowerBi Azure app registered in step 1 here.

- reportUid: Update this with your report’s Guid from the last step.

- oDataFilter: Update the oData parameters as required for your report. You can see I’m using window.PDP_projUid, which is a variable declared globally by Project Online and available in all PDPs to provide the current project’s Guid.

- The iframe element at the end of the file: Set the width and height desired to fit the report in the web part. For the height add 56 px to fit in the PowerBI bar at the bottom.

I won’t go through the whole script in detail; read the comments if you want to know what’s happening. However, I will note that I have added the following query strings to the eventual URL of the report (the url variable in the script):

‘?filterPaneEnabled=false&navContentPaneEnabled=false’

They will hide both the filter pane from the right and the report page view from the bottom. (if anyone knows the query string to hide the PowerBI bar at the bottom please post in the comments!)
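If you end up toggling these options per report, the URL can be assembled rather than hard-coded. The following is only a sketch: buildEmbedUrl is a hypothetical helper, and the two option names are simply the ones already used in the script, so I make no claim about which other query strings PowerBI accepts.

```javascript
// Hypothetical helper: appends embed options to the base URL as query strings.
function buildEmbedUrl(base, options) {
  var params = new URLSearchParams();
  Object.keys(options).forEach(function (key) {
    params.set(key, String(options[key]));
  });
  return base + "?" + params.toString();
}

var embedUrl = buildEmbedUrl("https://app.powerbi.com/reportEmbed", {
  filterPaneEnabled: false,
  navContentPaneEnabled: false
});
console.log(embedUrl);
// https://app.powerbi.com/reportEmbed?filterPaneEnabled=false&navContentPaneEnabled=false
```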

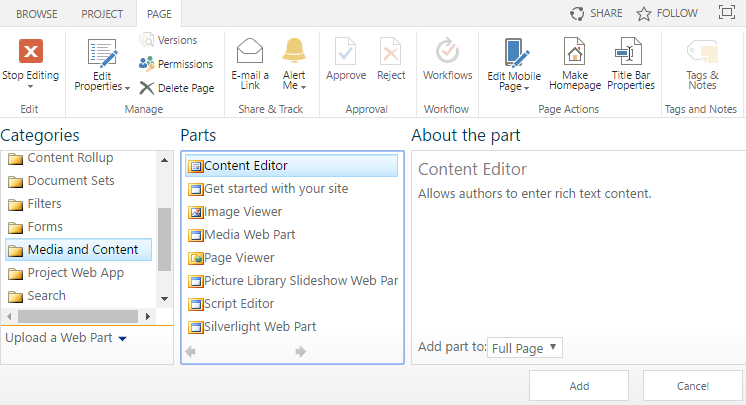

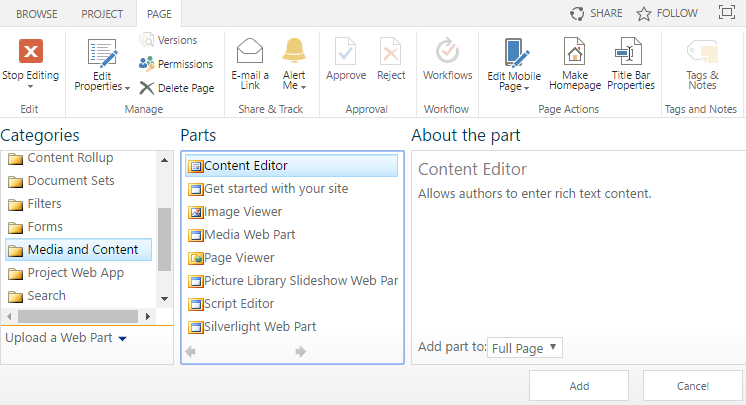

5. Add the Script to a Content Editor Web Part

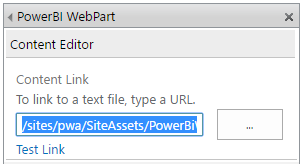

Last steps: upload this script somewhere into your SharePoint site collection, e.g. /sites/pwa/SiteAssets and keep a note of the URL.

Edit the web part page / project detail page that you want to add this to, and add the Content Editor Web Part:

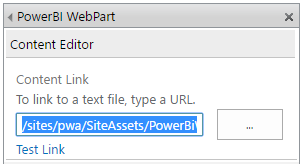

Next edit the web part properties and update the URL to point to your script, for example; /sites/pwa/SiteAssets/PowerBiWebPart.html

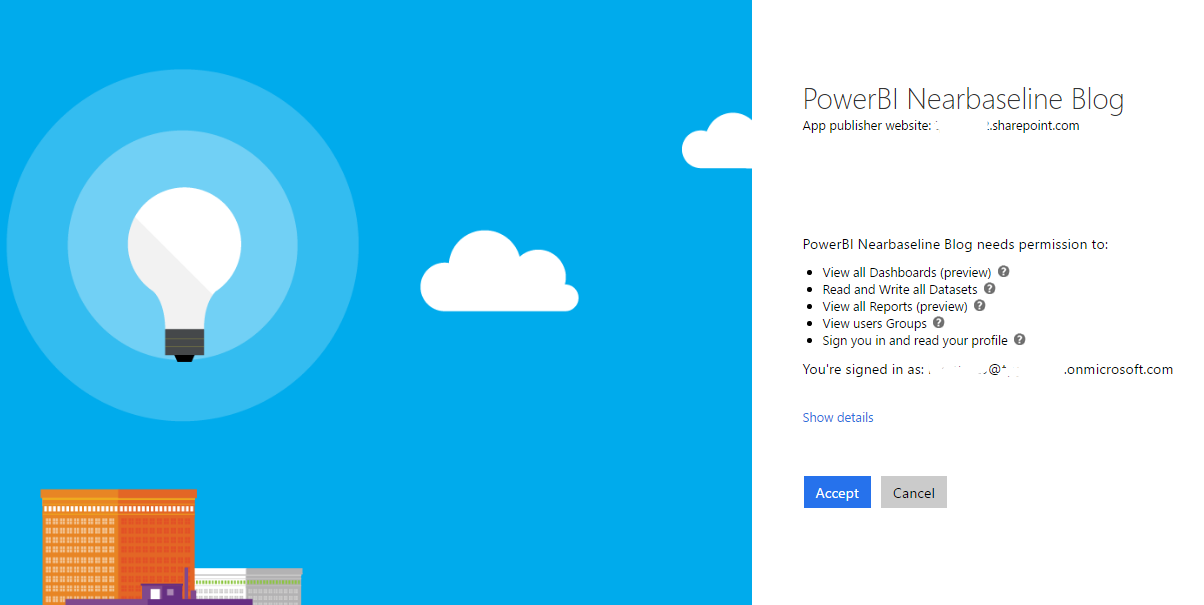

You can leave the rest of the web part settings as defaults. Now save the page and stop editing; on refresh you should see this:

The script has just taken over the browser to authenticate you, and now the Application permissions need to be approved by the user. You should not have seen the normal logon prompt, as in the script’s config object we have configured prompt: 'none', but every user will still see the above consent form.

Note: I haven’t tested the “admin_consent” prompt setting, which in theory should allow the admin to consent on behalf of all users. I’ll update this when I get a chance to try that out.

Once you Accept then you should be immediately redirected back to the page you were in:

Neat.

As you can see that PDP is open on a project called “Apparel ERP Upgrade” and the report filter has been applied successfully!

Final Words

The user experience in these OAuth2 authentication-with-consent flows can be a bit weird, even more so in this case where a web part in a page is causing a full page redirect (and return). Not much can be done about that, as OAuth2 requires this flow to be 100% in the control of the authentication provider (i.e. Azure AD here), so it is not possible to hide this in a frame or popup window or anything like that.

Fortunately, once done, the token is cached for subsequent requests, so the authentication redirect will only happen once per day or so (possibly longer). Additionally, after the initial user consent is granted, the authentication will return automatically without further prompting, although it might look a bit weird on a slow connection.

Finally, if you want to display multiple reports on one page, don’t add the webpart to the page more than once; I found that the authentication gets very confused when the token is not in the cache. Instead, update the script to load multiple iFrames, or just load a dashboard.
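For the multiple iFrames route, the idea is to acquire the token once and then build one loadReport message per iFrame. This is a minimal sketch of that message-building step only, not a tested drop-in: the iframe ids and report GUIDs are placeholders, the helper names are hypothetical, and the message fields mirror the ones in the script above.

```javascript
// Builds the JSON message the script posts to a report iFrame.
// Field names mirror the loadReport message in the script above.
function buildLoadReportMessage(report, accessToken) {
  return JSON.stringify({
    action: "loadReport",
    reportId: report.reportUid,
    accessToken: accessToken,
    oDataFilter: report.oDataFilter,
    width: report.width,
    height: report.height
  });
}

// Hypothetical report list; the iframe ids and GUIDs are placeholders.
var reports = [
  { iframeId: "powerBiframe1", reportUid: "11111111-1111-1111-1111-111111111111", oDataFilter: "", width: "660px", height: "296px" },
  { iframeId: "powerBiframe2", reportUid: "22222222-2222-2222-2222-222222222222", oDataFilter: "", width: "660px", height: "296px" }
];

// One token, one message per iFrame.
function buildAllMessages(reportList, accessToken) {
  return reportList.map(function (report) {
    return {
      iframeId: report.iframeId,
      message: buildLoadReportMessage(report, accessToken)
    };
  });
}

// In the browser, each message would be posted once its iFrame has loaded:
//   document.getElementById(item.iframeId).contentWindow.postMessage(item.message, "*");
var messages = buildAllMessages(reports, "token-from-acquireToken");
console.log(messages.length);
```

Keeping a single acquireToken call and fanning out from its callback avoids the competing redirects that seem to confuse the cache.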

Enjoy! And please leave a comment below if you find this useful!

by Martin Laukkanen | Nov 25, 2014 | Apps, Reporting

Project Server 2013 changed a lot when it comes to both security and reporting, and I’ve had a few customers asking about configuring user access to OData both for reporting and apps (such as Bulk Edit). Fortunately Microsoft has some good guidance available on MSDN covering this topic:

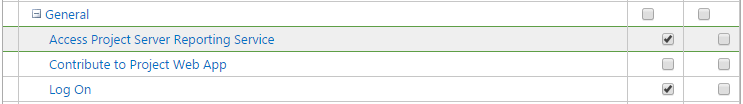

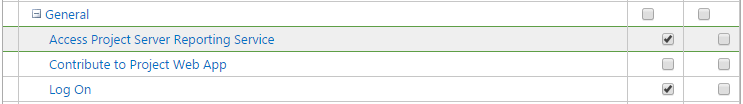

When Project Server 2013 or Project Online is in Project permission mode, you can explicitly grant or deny access to the OData feed for specified Project Web App users. For example, on the Edit User page in Project Web App, expand the Global Permissions section, and then in the General section, select the Access Project Server Reporting Service check box in the Allow column.

In the default SharePoint permission mode, not all Project Web App users have access to the OData feed. Only users in the following groups have access: Portfolio Viewers, Portfolio Managers, and Administrators

Source: https://msdn.microsoft.com/en-us/library/office/jj163015.aspx

So basically if using Project Permission mode then this is what you’re after (preferably from Edit Group, not edit user as MSDN suggests):

Unfortunately, as the above article goes on to mention, there is no granularity to the access: it is all or nothing. So if you’re looking for the kind of flexibility that the cubes previously gave, you’re out of luck!

What has this got to do with Apps?

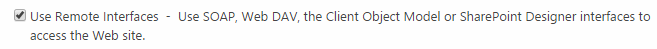

OData and the REST APIs used in CSOM / JSOM are closely related. For Project Apps that need to work with project data, such as Bulk Edit, we also need to grant access to “Use Remote Interfaces” in SharePoint. To do that, check the Permission Levels configuration: Site Settings -> Site Permissions -> Permission Levels -> Edit

By default the Project Managers SharePoint Group has this already, so if for example you want to grant all Project Managers in PWA permissions to run Bulk Edit, then all you need to do is add the Project Server Global reporting permission above, and combined with the SharePoint permissions they will be able to edit all project information.

What if I want Granularity?

I’m not good at saying “no it can’t be done” :) so if you require reporting granularity, the solution would be to use the SSIS reporting to create your own local SQL replica where your DBAs can apply whatever permissions are required. Reason 999 why that SSIS solution is key to any deployment in the future!

How about Apps? The picture is a little better but at the same time less simple. REST is security trimmed, so a PM will by default only be able to update / modify the projects to which he / she has permissions, great. However, as Apps can leverage both OData and REST endpoints, which work differently, this may result in inconsistencies depending on how the App was designed, for example:

Bulk Edit (at the time of writing) will allow Read Access to all Projects as a minimum, BUT will only allow updates to projects to which the user has the rights.

If you’re wondering about any other app, then these simple rules apply:

- Writing data always requires REST – allows security trimmed granularity.

- Reading sometimes requires OData – does not allow granularity.

I expect this to change in the future. From experience, Project Server security has been a moving target since the very beginning, so perhaps we’ll see more granularity in the future.

Hope that helps someone out there..

by Martin Laukkanen | Sep 5, 2014 | Project 2013, Project Online, Reporting

I came across this while working with a customer and quickly found that the error triggers a known issue in my Bulk Edit app as well, which was causing some unexpected errors for some people using the app. However, this is a broader issue, as it causes basic OData feeds to fail completely with the following message.

Error message accessing /_api/ProjectData/Projects:

<?xml version="1.0" encoding="UTF-8"?>

<m:error xmlns:m="http://schemas.microsoft.com/ado/2007/08/dataservices/metadata">

<m:code/>

<m:message xml:lang="en-US">An error occurred while processing this request.</m:message>

</m:error>

The problem can happen either on-prem or online, but if you have access to the ULS logs you will see the following log entries (showing only the interesting bits):

Project Server Database agxfb Exception [bucketHash:7C60C52B] SqlException occurred in DAL (ProjectWebApp@NB): Class 16, state 1, line 1, number 8156, procedure , error code -2146232060, exception: System.Data.SqlClient.SqlException (0x80131904): The column 'Campaignstatus' was specified multiple times for 'OdataSelect'.

Project Server OData abljj High [Forced due to logging gap, Original Level: Verbose] PWA:http://nb/PWA, ServiceApp:Project Server Service Application, User:i:0#.w|blah\ml, PSI: Exception encountered when trying to instantiante the OdataResultItemCollection

As error logs go that is a pretty helpful one!

Cause – Duplicate Custom Fields

The issue is caused by the Enterprise Custom Field configuration of the Project Server; specifically, it looks like there are some duplicate named fields (Campaignstatus in my error above). Now of course that is not possible! However, when you look at the OData feed you can see that a few things happen:

- Spaces are removed from field names; so e.g. Campaign Status becomes CampaignStatus, etc.

- Duplicate named fields (after space removal) are numbered, so for example you may see CampaignStatus1.

However, there is a bug: OData is case-sensitive while SQL is not. So in my example above it seems that there are two fields with the same name but with different cases; specifically, I have the following two fields configured: Campaign Status and Campaignstatus. In my case, if the second field were named CampaignStatus then this issue would not occur, as it would have a number appended in the feed! (While this might sound a little esoteric, I’ve already seen this a couple of times in German PWA instances where words are typically conjugated, etc.)
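If you want to check a list of custom field names for this problem before it bites, the rule described above can be sketched as follows. This is my own approximation of the behaviour, not Project Server’s actual algorithm, and findCaseCollisions is a hypothetical name: it strips spaces, then flags names that differ only by case (names that are identical after space removal are not flagged, since the feed numbers those).

```javascript
// Approximation of the rule described above, not Project Server's own code.
// Flags field names that become different-only-by-case once spaces are removed.
function findCaseCollisions(fieldNames) {
  var groups = new Map();
  fieldNames.forEach(function (name) {
    var odataName = name.replace(/ /g, "");   // spaces are removed in the feed
    var key = odataName.toLowerCase();        // SQL compares case-insensitively
    if (!groups.has(key)) {
      groups.set(key, new Set());
    }
    groups.get(key).add(odataName);
  });

  var collisions = [];
  groups.forEach(function (names) {
    // More than one distinct spelling for the same case-insensitive name.
    if (names.size > 1) {
      collisions.push(Array.from(names));
    }
  });
  return collisions;
}

var collisions = findCaseCollisions(["Campaign Status", "Campaignstatus", "Owner"]);
console.log(JSON.stringify(collisions));
```

With the two fields from my example this reports the CampaignStatus / Campaignstatus pair and nothing else.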

Solution

Simple, either:

- Rename the aforementioned field to something completely different (e.g. Campaignstatus2), or

- Recreate the field with a different case, e.g. in my example by recreating the field Campaignstatus as CampaignStatus.

Note: renaming a field to a different case does not work(!); you need to recreate the field in order to regenerate the correctly cased OData name.

Hopefully this bug will be fixed in a future service pack, but for now in Bulk Edit at least I have implemented a workaround anyway.

by Martin Laukkanen | Jul 25, 2014 | Reporting, SQL

I saw this issue when working with an old colleague Hammad Arif earlier this year and he came up with a solution written up here that worked nicely at the time. However, I’ve come across this again, and this time it proved more of a challenge.

Problem

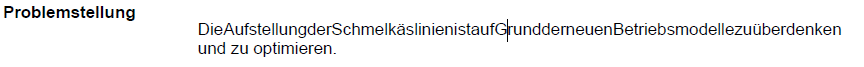

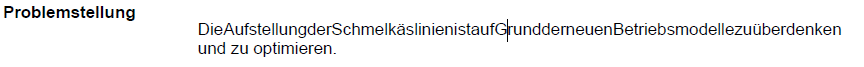

When exporting Reporting Services reports containing multi-line text from a SQL datasource to PDF, the first line of text loses all spaces between words; see the screenshot taken from a PDF file:

Nope, that’s not one particularly long German conjugation, that is the problem I’m talking about! It only seems to affect the first line of each multi-line custom field value in the SSRS dataset, and when looking at the data in SQL or in the HTML source there is nothing different visible! However, oddly, on the affected projects a single odd character (&#8203;) does exist before the html <span>.

Resolution

After much head scratching it took a call to Microsoft to identify that hidden away in the database tables are some characters, specifically HEX ‘0B 20’, which turns out to be the Zero Width Space (U+200B), or in HTML &#8203;, the same character you can see in the HTML. Now this makes sense: despite being invisible in the SQL tables when using a SELECT statement, these characters still exist and cause these symptoms in the PDF, but not when viewed on the Web or exported to Word or other formats.

Fortunately the resolution for the report is nice and simple:

SELECT REPLACE(Problemstellung, NCHAR(8203), '') AS Problemstellung

Simply wrap each multi-line text field affected with the SQL REPLACE command removing the character completely!
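The same character can be checked for outside SQL too, for example when inspecting a field value in a browser console. A small sketch, assuming nothing beyond the character code 8203 used in the query above (the sample text is made up):

```javascript
// Character code 8203 is U+200B, the zero width space removed by the SQL above.
var ZERO_WIDTH_SPACE = String.fromCharCode(8203);

// Removes every zero width space from a string.
function stripZeroWidthSpaces(text) {
  return text.split(ZERO_WIDTH_SPACE).join("");
}

// Made-up sample value with the invisible character at the start.
var sample = ZERO_WIDTH_SPACE + "Problem statement text";
var cleaned = stripZeroWidthSpaces(sample);
console.log(sample.length - cleaned.length); // number of characters removed
```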

To answer the question of where they came from, that is one I leave to the Microsoft escalation engineers. :)

HTH,

by Martin Laukkanen | Apr 9, 2014 | How to, Reporting

I’ve been looking forward to using the new SSIS OData Connector for SQL 2012 since first hearing about it at Project Conf last month. Paul Mather wrote up a great step-by-step guide on getting it all up and running here, so have a look at that if you haven’t yet.

However the devil is always in the details! Creating a simple report combining Project data and SharePoint site data is not as straight-forward as you might hope.

Reporting custom project site list data from ProjectOnline

Possibly the most common customer request I get when it comes to reporting is the need to report against non-standard Project site list data, something that was simple using SSRS 2008 R2 but made impossible in ProjectOnline, until now that is.

Following on from Paul’s post, in order to do this you’ll need an SSIS package that does the following:

- Retrieve Project Data from the ProjectOnline OData endpoint: /_api/ProjectData

- Get a list of Project Site URLs from the data and save it in a variable

- Loop through each Project Site and retrieve data from the SharePoint endpoint: /[ProjectURL]/_vti_bin/listdata.svc after dynamically updating the [ProjectURL]

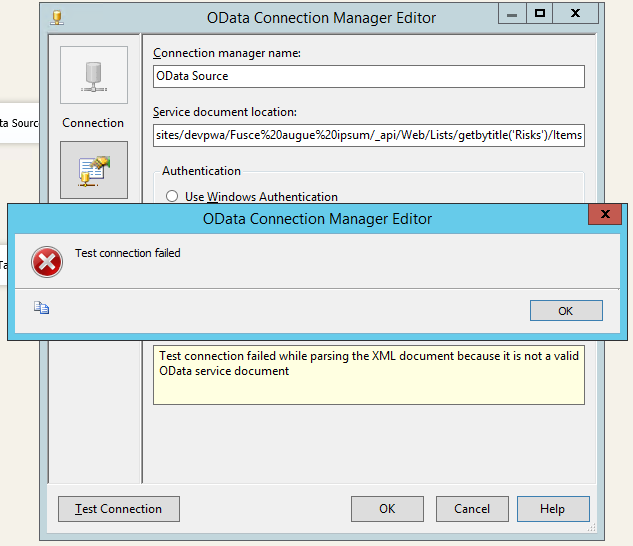

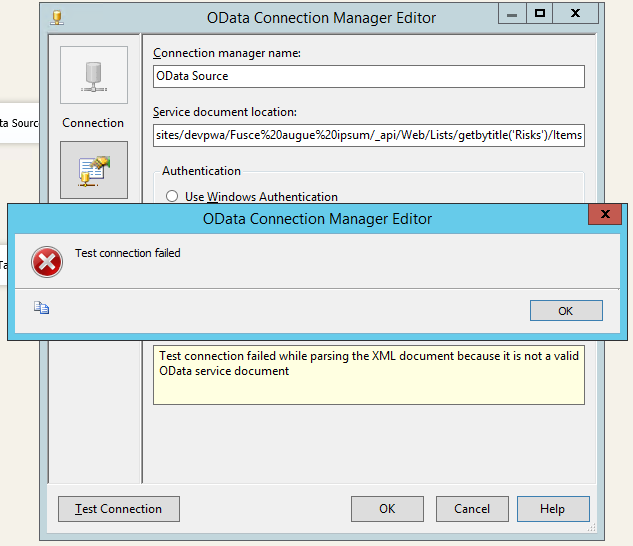

You’ll also notice that in step 3 I use the listdata.svc endpoint rather than the REST endpoint (e.g. /_api/Web/Lists/getbytitle('Risks')/Items). If you followed Paul’s steps above and tried to retrieve the site list data, then you are probably seeing the following error when building the connection:

Error message:

Test connection failed while parsing the XML document because it is not a valid OData service document.

If you’re seeing that message it is because the SSIS connector is expecting a ‘service document’ and not the actual OData feed. Not sure why the SharePoint REST endpoints don’t also have a service document at the root but we can work around it by using the listdata.svc.

Finally once we have the list of all of the Project site URLs then we need to update the Data Connection URL before we retrieve the site list data. I’ll cover all of these steps below.
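Before getting into the SSIS details, the overall logic of the loop can be sketched in a few lines. This is only an illustration of the idea, not what SSIS runs: the function names are hypothetical, the contoso URLs are placeholders, and the ProjectId and ProjectWorkspaceInternalUrl field names are the ones from the ProjectData feed used later in this post.

```javascript
// Builds the listdata.svc endpoint for one project site (trailing slashes trimmed).
function listDataUrl(projectSiteUrl) {
  return projectSiteUrl.replace(/\/+$/, "") + "/_vti_bin/listdata.svc";
}

// Keeps only projects that have a site, and pairs each ProjectId with its endpoint.
function siteEndpoints(projects) {
  return projects
    .filter(function (p) { return Boolean(p.ProjectWorkspaceInternalUrl); })
    .map(function (p) {
      return { projectId: p.ProjectId, url: listDataUrl(p.ProjectWorkspaceInternalUrl) };
    });
}

// Placeholder rows standing in for the Projects collection.
var endpoints = siteEndpoints([
  { ProjectId: "11111111-1111-1111-1111-111111111111", ProjectWorkspaceInternalUrl: "https://contoso.sharepoint.com/sites/pwa/Test%20Project/" },
  { ProjectId: "22222222-2222-2222-2222-222222222222", ProjectWorkspaceInternalUrl: null }
]);
console.log(endpoints);
```

Carrying the ProjectId alongside each URL is what later lets the list data be joined back to the project.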

Requirements for building the SSIS package

Paul Mather’s blog covers the basic setup, so I won’t cover that in detail but in summary to create / use the solution below you will need:

- SQL Server Integration Services 2012

- SQL Data Tools 2012 (the BIDS replacement on the SQL DVD)

- Microsoft® OData Source for Microsoft SQL Server® 2012

- SharePoint Server 2013 Client Components SDK

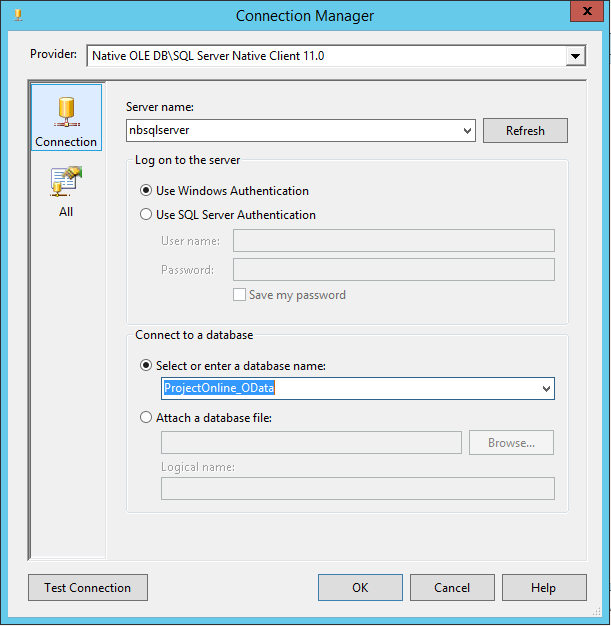

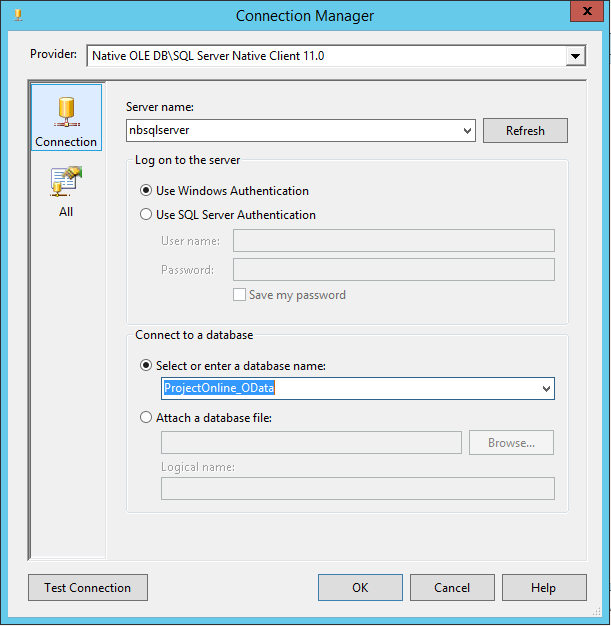

- An empty SQL database to write to, I’m going to use one called ProjectOnline_OData

You can run Data Tools on your client but the rest must be installed on the SSIS server.

Creating a SSIS package to retrieve site custom list for all projects

Create a new SSIS package and start by adding the following Connection Managers:

- OLE DB Connection which points to your SQL database: e.g. ProjectOnline_OData

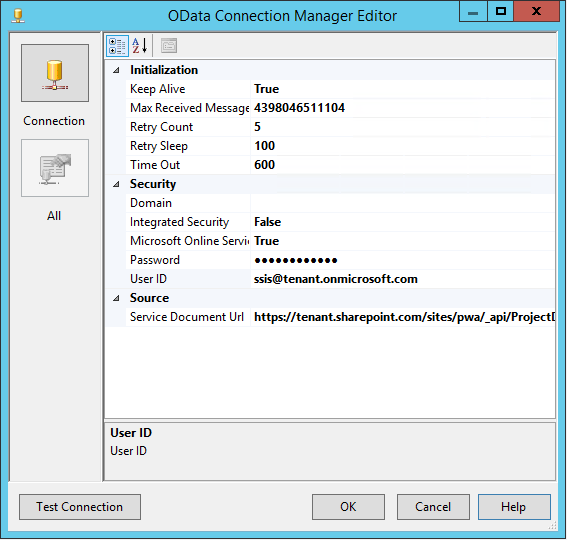

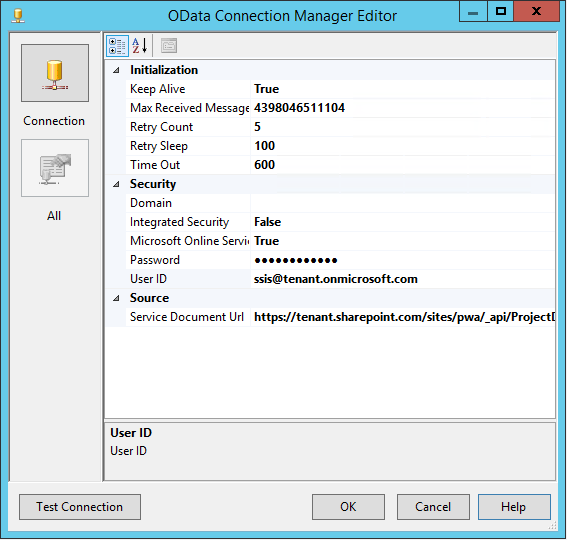

- An OData Connection pointing to the ProjectData OData service: e.g.

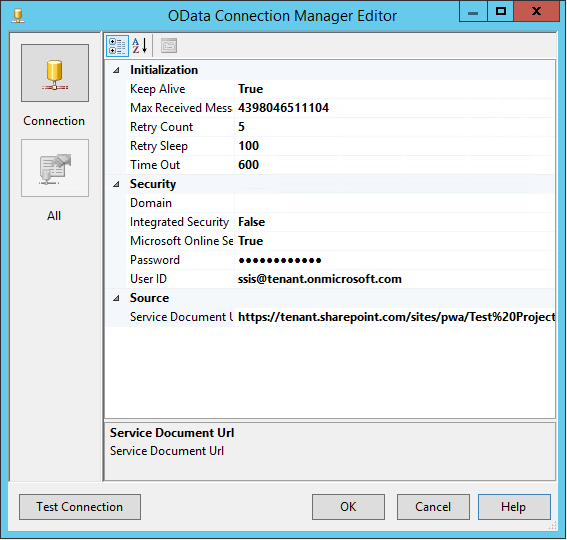

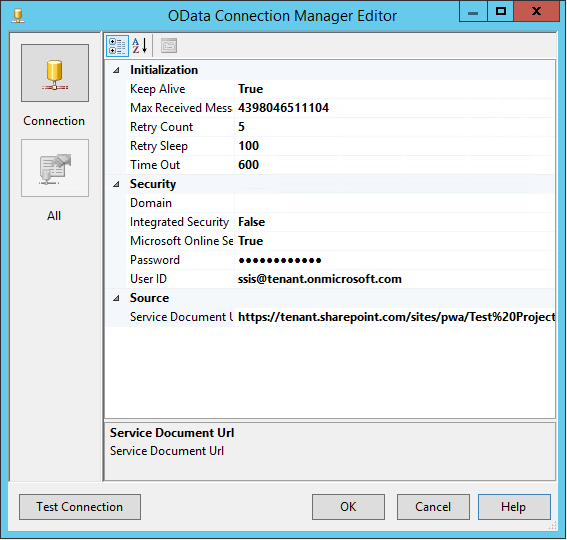

- An OData Connection pointing to the SharePoint ListData.svc OData service of an existing project site: e.g. Test%20Project/_vti_bin/listdata.svc

Make sure that each connection tests successfully, and give them descriptive names which will be used later. I’m using OData_ProjectData and OData_SharePointListData for the last two; note that both names will be referenced below.

A note on security

The account used to authenticate with our OData connections must be a ProjectOnline user, and specifically it must have access to all projects and project sites. In order to do this easily I have added the user to the following:

- Project Web App Administrators

- Site Collection Administrators

Adjust as you see fit.

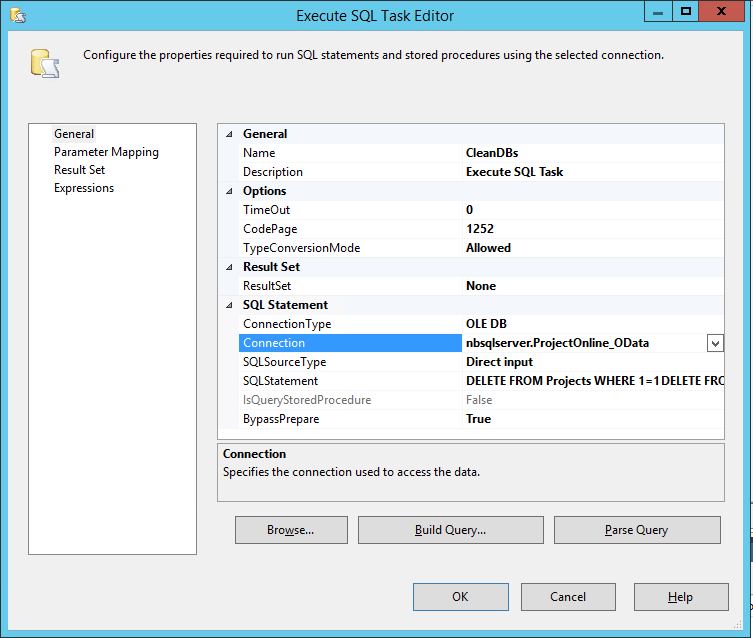

Retrieve project data from OData

Now that we have our data connections we need to first get our Project data (and optionally any other data such as tasks), then prepare to get our project site data.

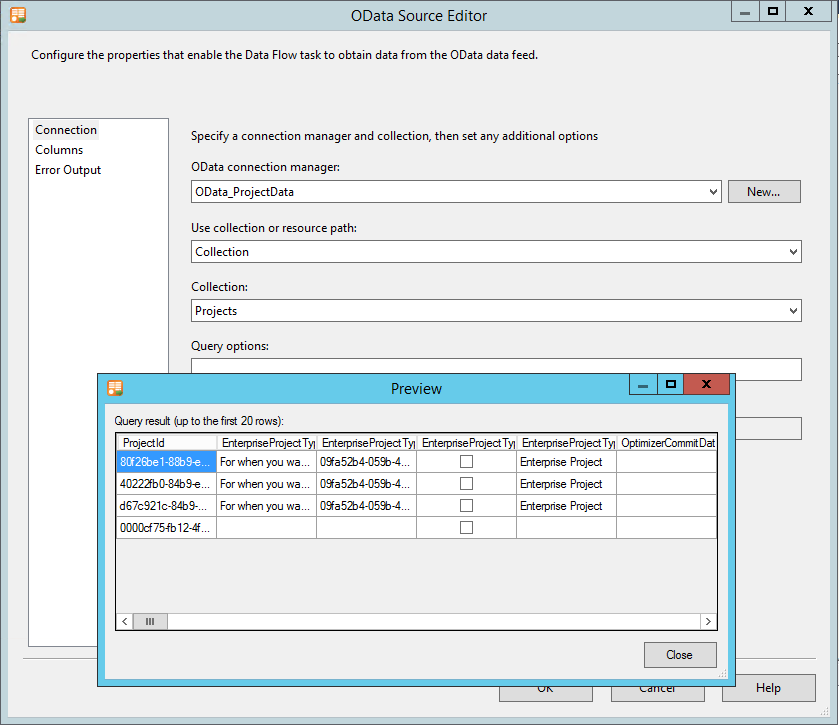

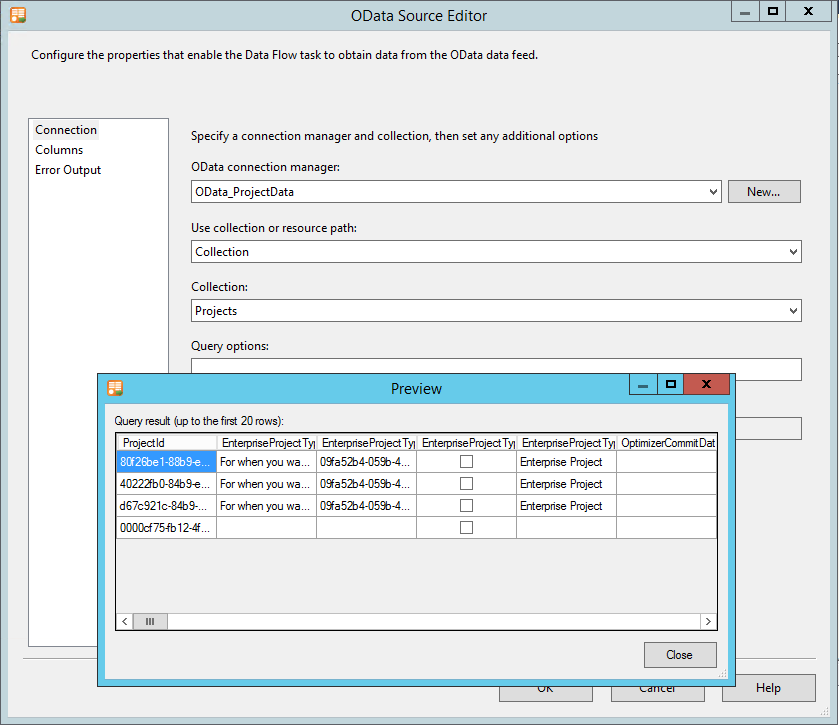

- As per Paul’s blog create a Data Flow task with an OData Source (OData_ProjectData) which points to the Projects collection.

- Ensure that you select only your required Columns, however you must include the following as a minimum: ProjectId & ProjectWorkspaceInternalUrl.

Projects collection OData source with preview

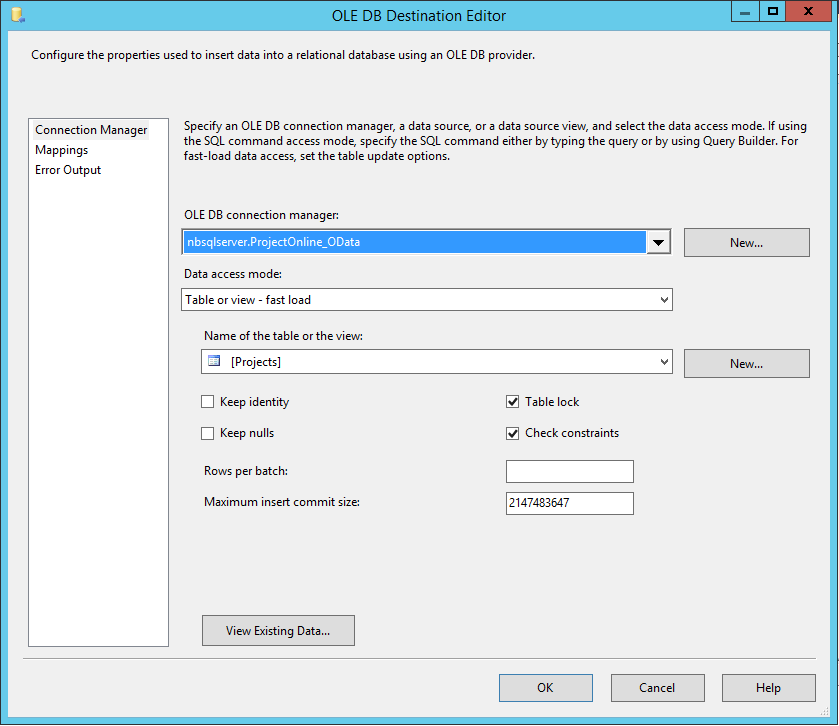

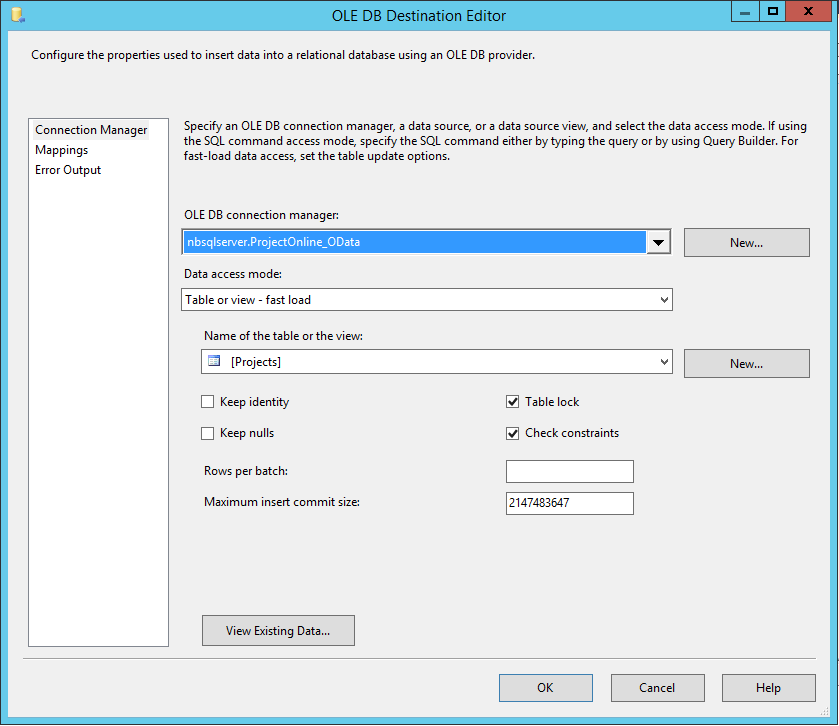

- Use the Destination Assistant to create the destination database

- Create a new table to store the data in (I’m using the name Projects) and configure your mappings

- Optionally you could add other Data Flow tasks in there to get Tasks or other data into additional database tables, but only using the OData_ProjectData connection at this point.

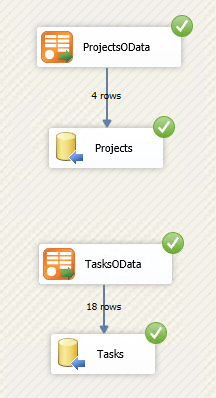

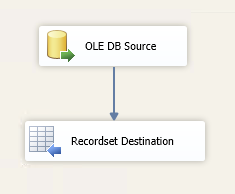

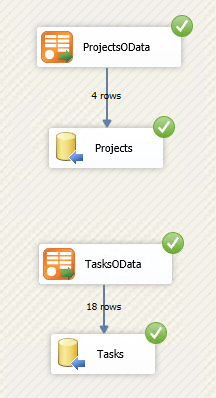

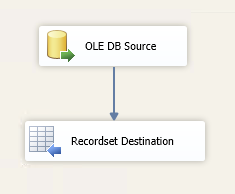

So now you should have a solution that runs and looks something like this when debugging:

Also if you started with Paul’s blog, then this is where those steps end.

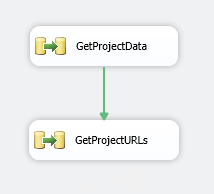

Prepare to loop through all project sites

In order to get the site list data for each project in PWA we first need to save a temporary variable with a list of all ProjectWorkspaceInternalUrl values.

- In your solution on the Control Flow tab add another Data Flow Task. Link the green arrow from the previous task to this one (so it runs second)

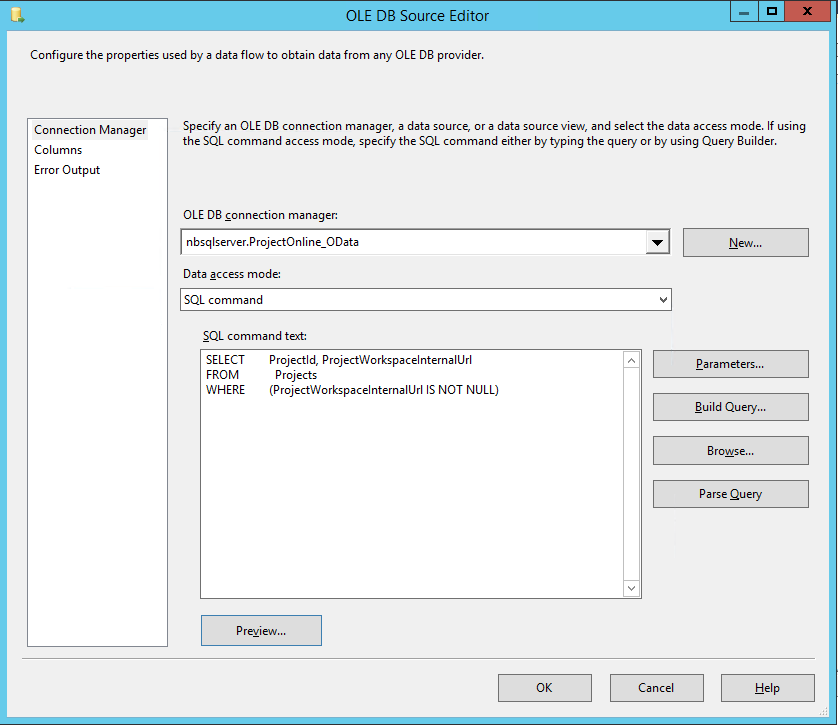

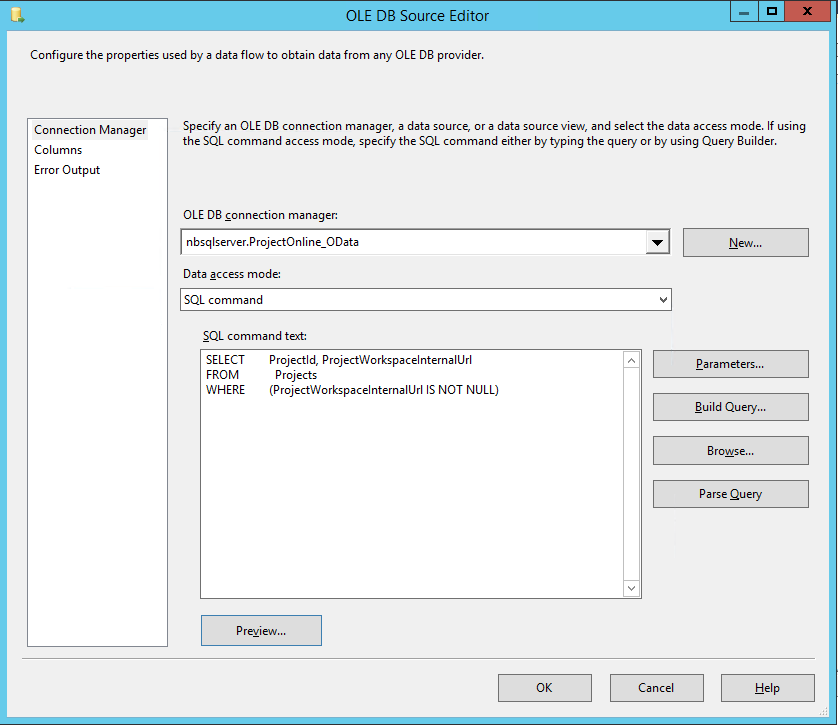

- Add an OLE DB Source to the Data Flow using the Source Assistant

- This source will be configured to get the project URLs saved into our database in the last step, using the following SQL command:

SELECT ProjectId, ProjectWorkspaceInternalUrl
FROM Projects
WHERE (ProjectWorkspaceInternalUrl IS NOT NULL)

So it looks like:

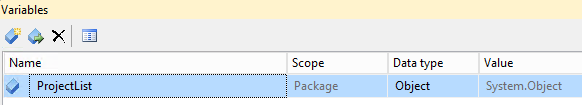

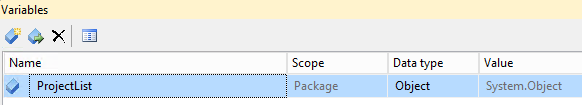

Create a temporary variable to store URLs

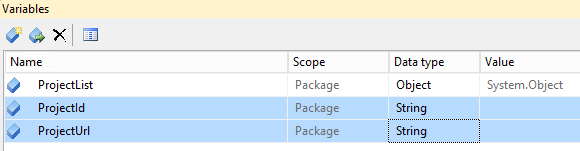

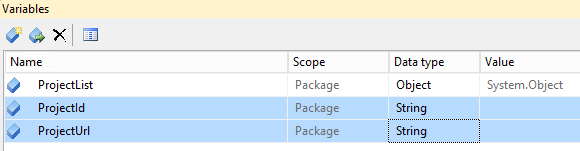

- Open the Variables window in Visual Studio (it’s one of the icons top right above the package area).

- Add a variable called ProjectList and set the Type to Object

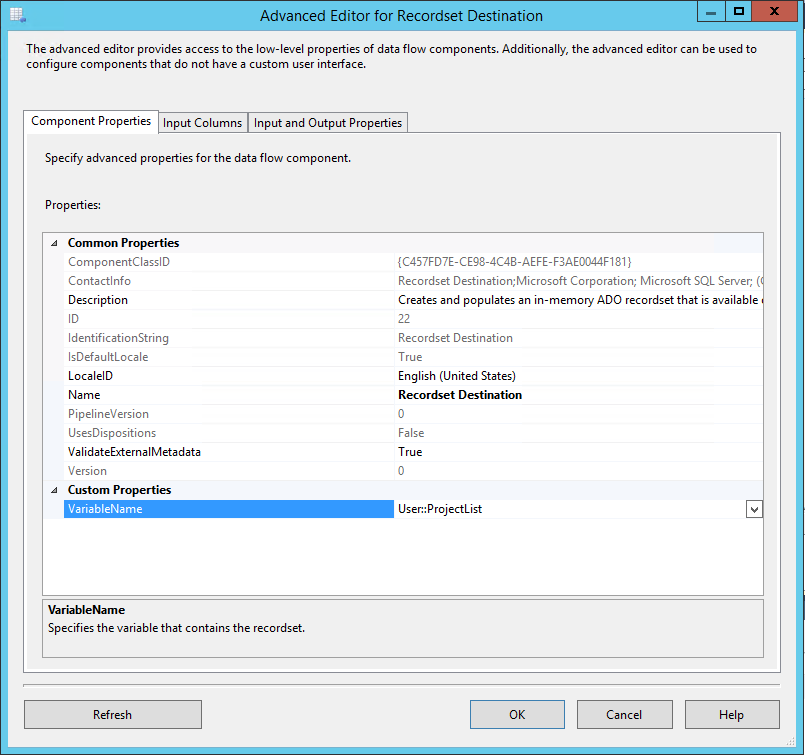

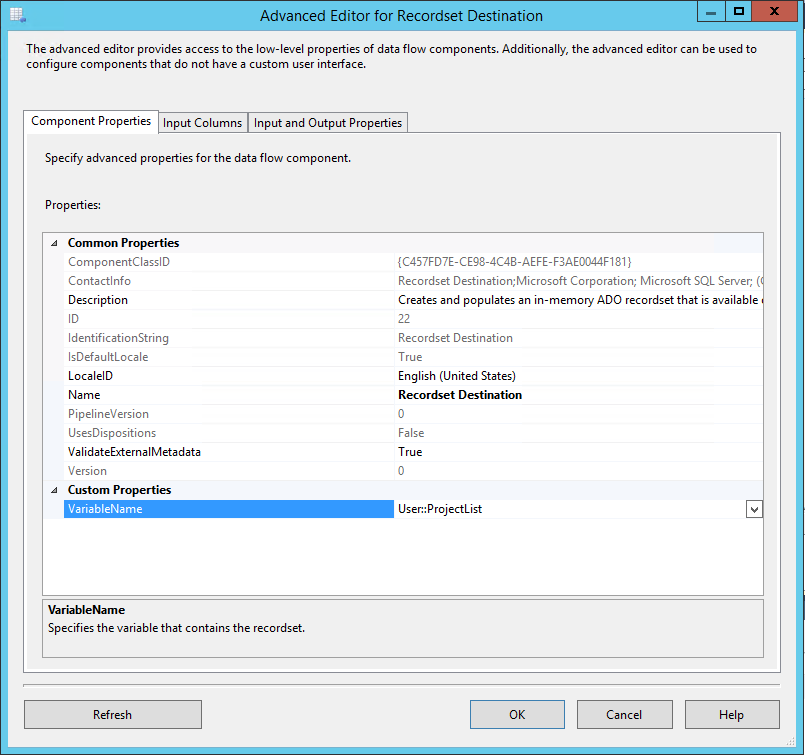

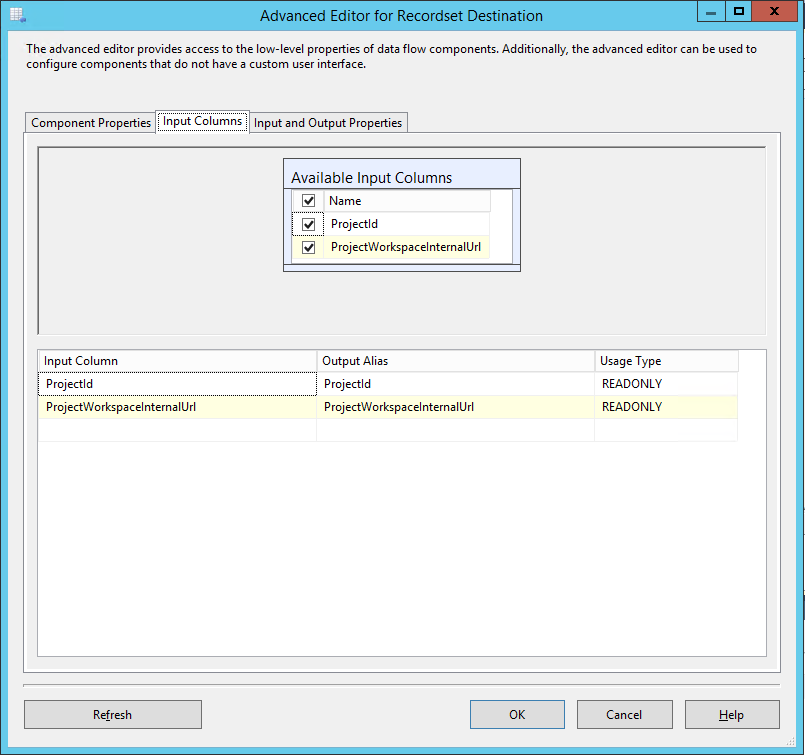

- Now add a Recordset Destination to the data flow and link the blue arrow from our OLE DB Source to it

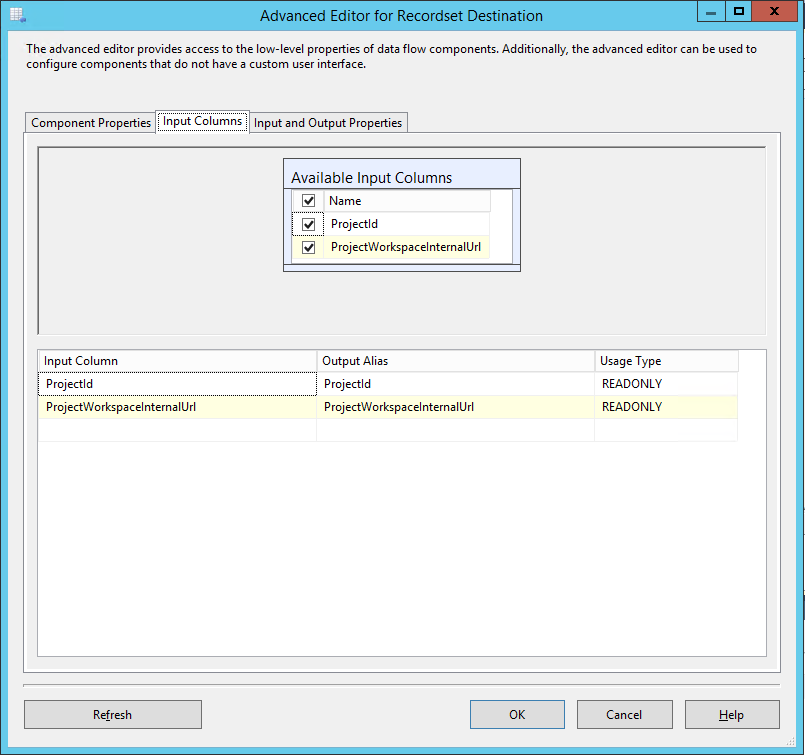

- In the advanced properties specify the VariableName just created.

- On the Input Columns tab select all columns

All other settings can be left with defaults. The Data Flow task should now look like this:

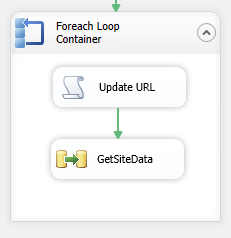

Create a Foreach loop to iterate through sites

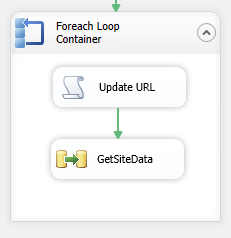

Now we’re getting to the tricky part. SSIS enables this kind of iteration nicely; however, we need to do a couple of special things to work with our OData list data: update the datasource URL to the next project, and extend the returned data by adding the ProjectId field of the project (for our FK).

- Firstly add two new Variables to the package; ProjectId and ProjectUrl, both as type String

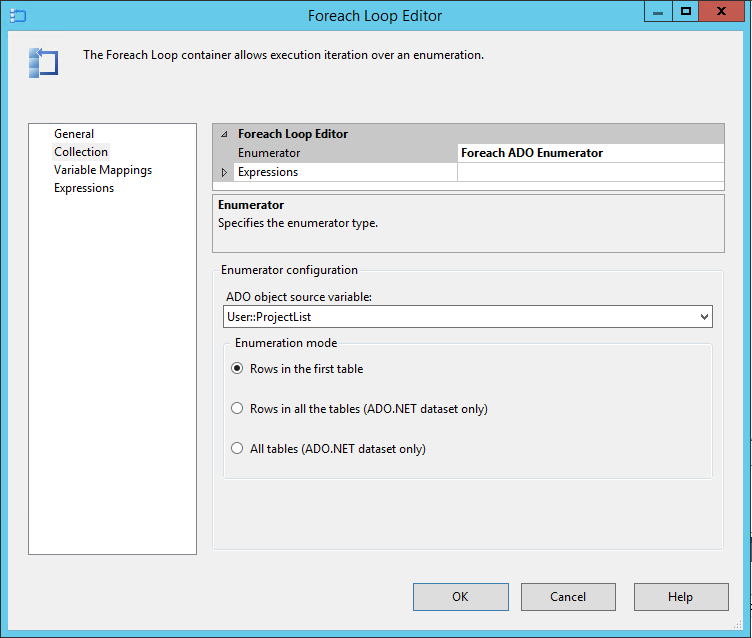

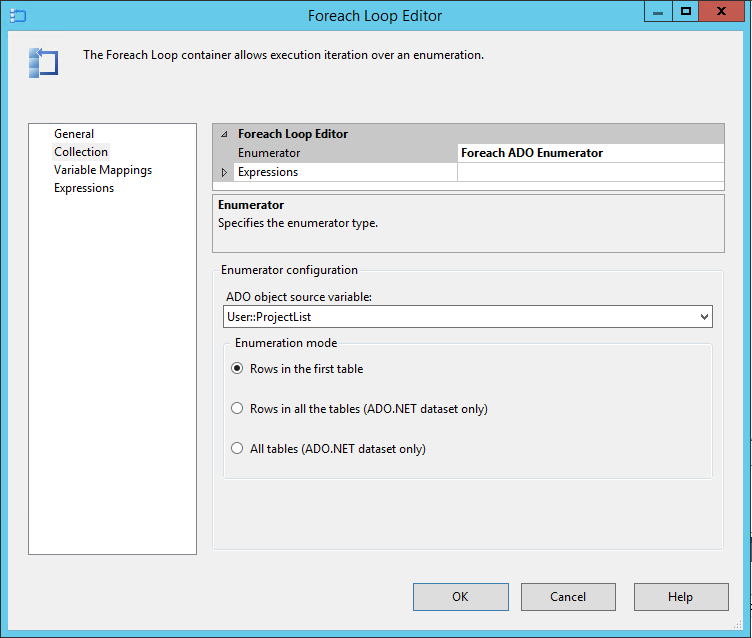

- Next drag in a Foreach Loop Container and connect the green arrow from the previous item to it

- In the Collection properties of the Foreach Loop select Foreach ADO Enumerator and specify the User::ProjectList variable created earlier

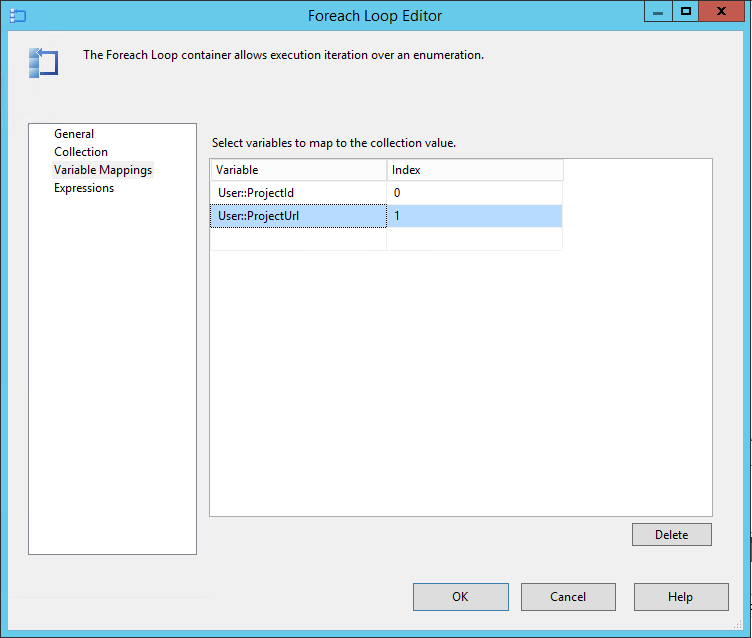

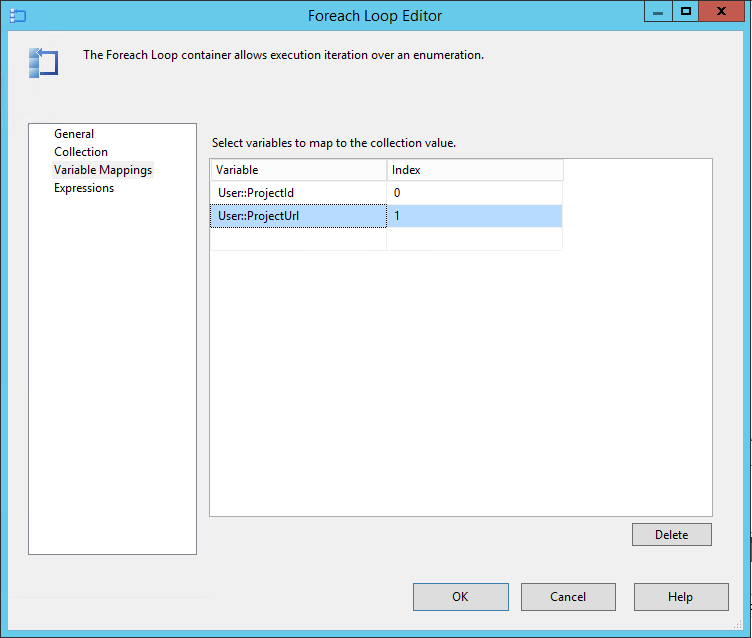

- Next under Variable Mappings specify our other variables in the following index order: Index 0 – User::ProjectId, Index 1 – User::ProjectUrl (note this order is important and used in the Foreach below!)

Update the SharePoint Listdata datasource URL

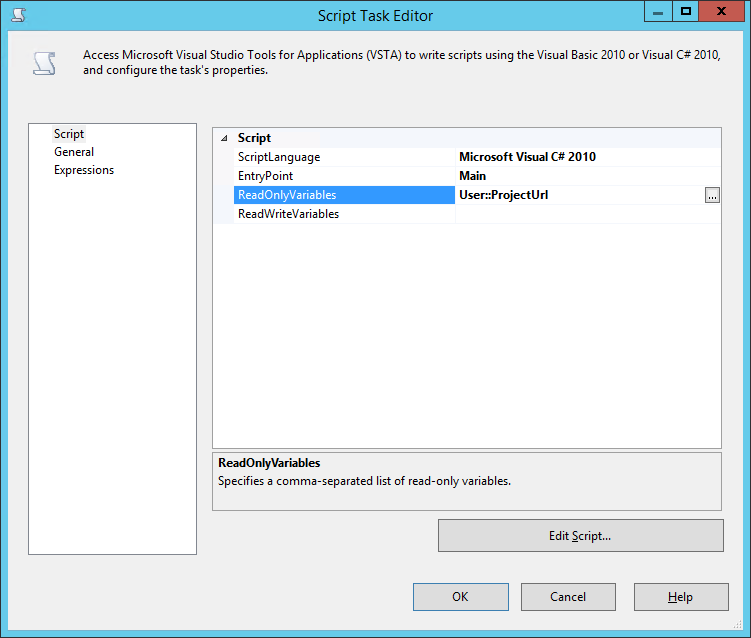

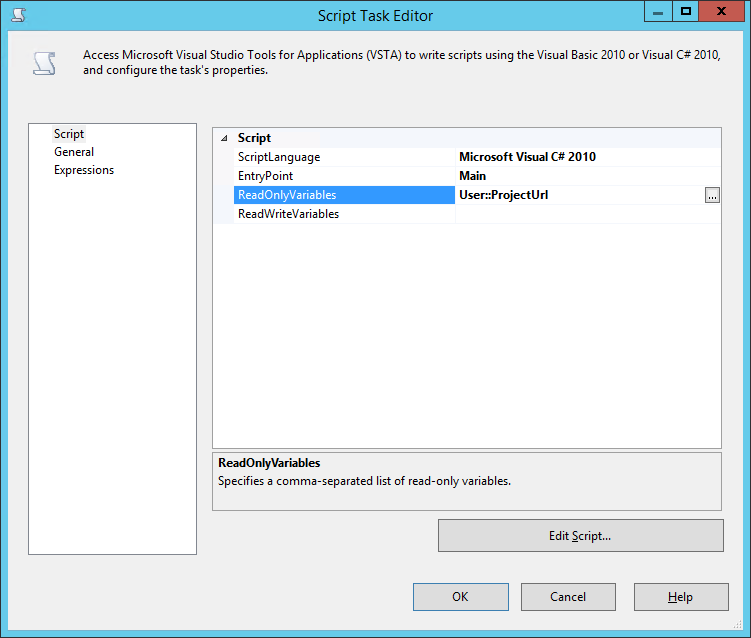

The first action inside our Foreach loop must be to update the connection string property of our SharePoint listdata data connection created at the beginning. Don’t run away, but we’ll do this using a Script Task and a few lines of code.

- Drop a Script Task inside the Foreach loop container

- In the properties make sure the ScriptLanguage is Visual C# 2010

- Then add a ReadOnlyVariable pointing to User::ProjectUrl

- Click Edit Script and we’ll be replacing the Main() block with the following code:

public void Main()
{
    // Read the current project's site URL (exposed to the script via ReadOnlyVariables).
    string projectUrl = (string)Dts.Variables["User::ProjectUrl"].Value;

    // Swap the site part of the listdata.svc connection string for the current project site.
    Dts.Connections["OData_SharePointListData"].ConnectionString =
        System.Text.RegularExpressions.Regex.Replace(
            Dts.Connections["OData_SharePointListData"].ConnectionString,
            @"https://.*(?=/_vti_bin/listdata\.svc)",
            projectUrl);

    Dts.TaskResult = (int)ScriptResults.Success;
}

IMPORTANT NOTE: In that code we reference the OData_SharePointListData connection by name which we created way back in the first step! So if you used another name make sure you update both references to that name in the code.
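If the regular expression in that script looks cryptic, here is the same replacement sketched in JavaScript so you can try it in a browser console. Note the assumptions: retargetConnectionString is a hypothetical name, and the "Url=...;" connection string below is a made-up example, since the exact format of the real connection string may differ.

```javascript
// Replaces everything from https:// up to (but not including) /_vti_bin/listdata.svc
// with the current project site URL. The lookahead keeps the endpoint suffix in place.
function retargetConnectionString(connectionString, projectUrl) {
  return connectionString.replace(
    /https:\/\/.*(?=\/_vti_bin\/listdata\.svc)/,
    function () { return projectUrl; } // function form avoids special "$" handling
  );
}

// Made-up connection string; the real format may differ.
var beforeString = "Url=https://contoso.sharepoint.com/sites/pwa/Test%20Project/_vti_bin/listdata.svc;";
var afterString = retargetConnectionString(
  beforeString,
  "https://contoso.sharepoint.com/sites/pwa/Project%20B"
);
console.log(afterString);
```

Only the site portion changes, so any other settings in the connection string are left untouched.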

Save and close that script window and we can move on to the last couple of steps.

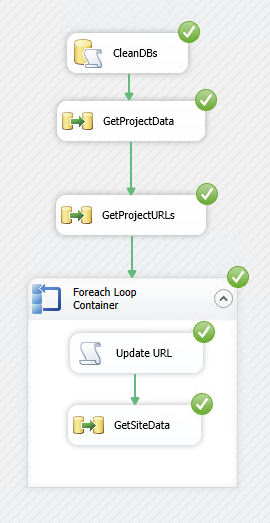

Getting project site data from each site

- Add a Data Flow Task after the script task inside the Foreach loop container, and link the green arrow from the script task to the data flow task

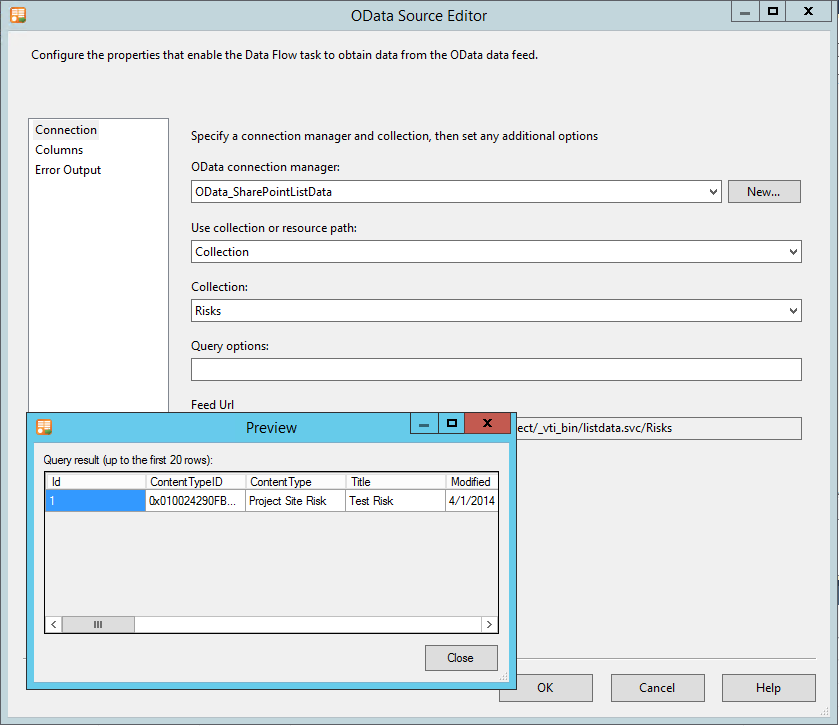

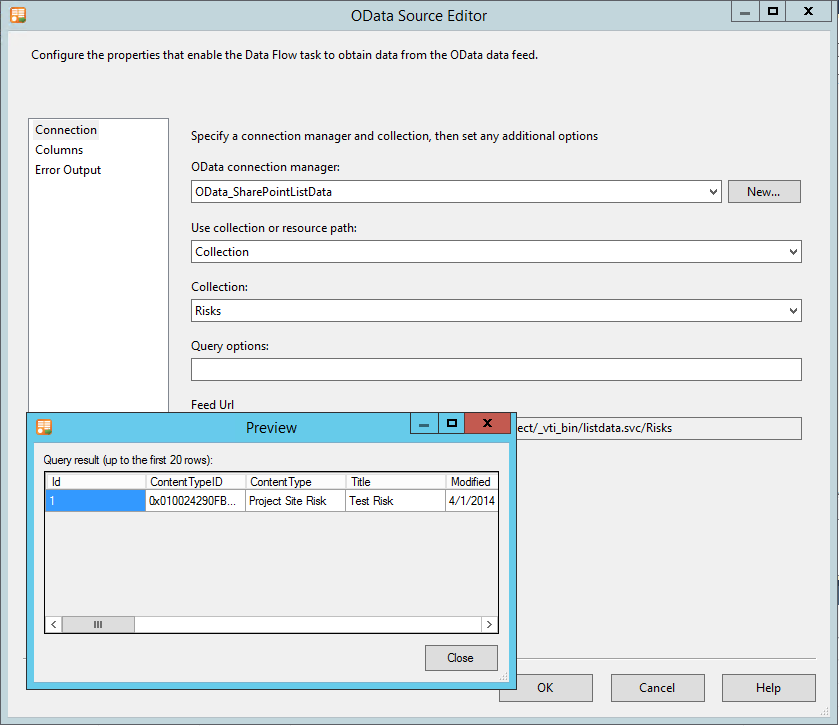

- In the Data Flow tab for this item, first add an OData Source

- Configure the source using the OData_SharePointListData source created and specify the required collection (I’m using Risks for the sake of this demo)

- Select the desired columns and preview to test

Note that here I can see the values from the test project site that I specified right back at the beginning; when the solution executes, however, the URL will have been updated before this step. Whenever you edit this solution in the future, that test site must be valid and accessible, or you will get errors here.

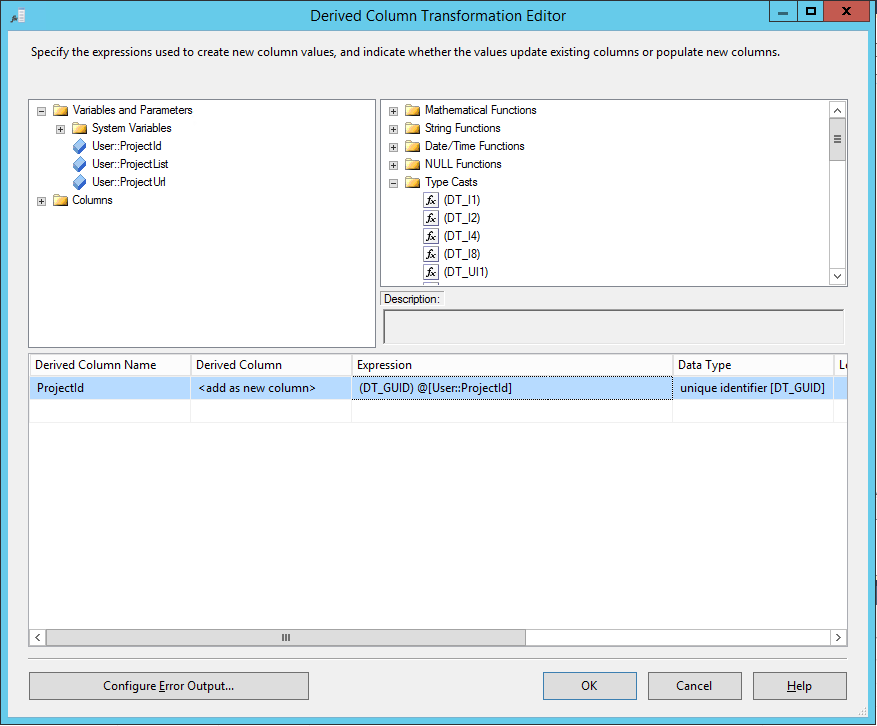

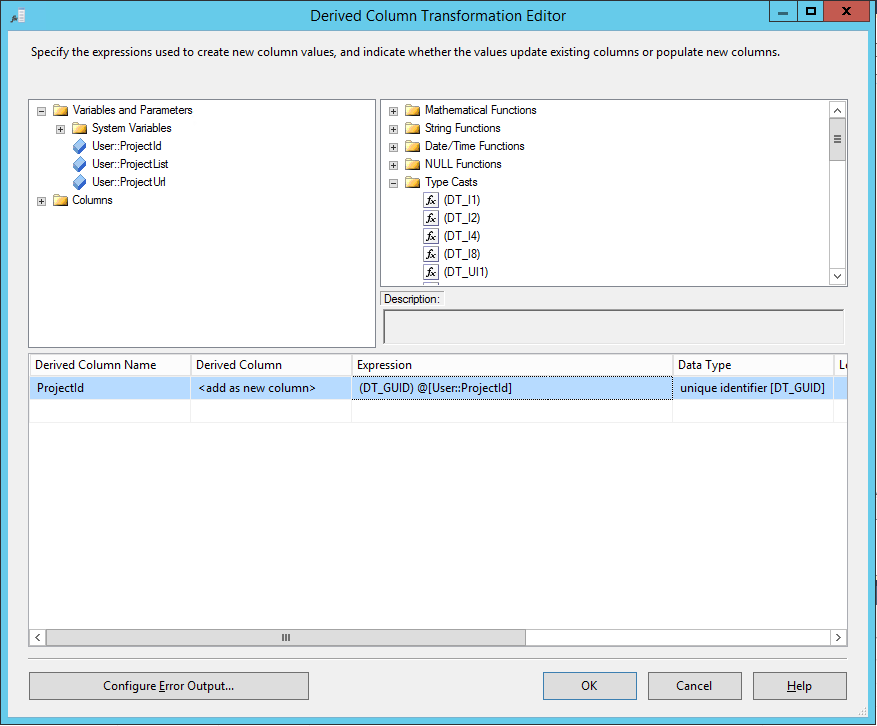

Next we need to add our Project Server ProjectId to the data coming from OData so we can use it as a foreign key in the destination database table.

- Drag a Derived Column onto the canvas, link it as the second step after the OData Source, and edit its properties

- Add a Derived Column Name as ProjectId

- From the Variables and Parameters drag the User::ProjectId into the Expression box

- Lastly (and importantly), from the Type Casts list drag the (DT_GUID) item to the start of the expression so that it reads exactly: (DT_GUID) @[User::ProjectId]

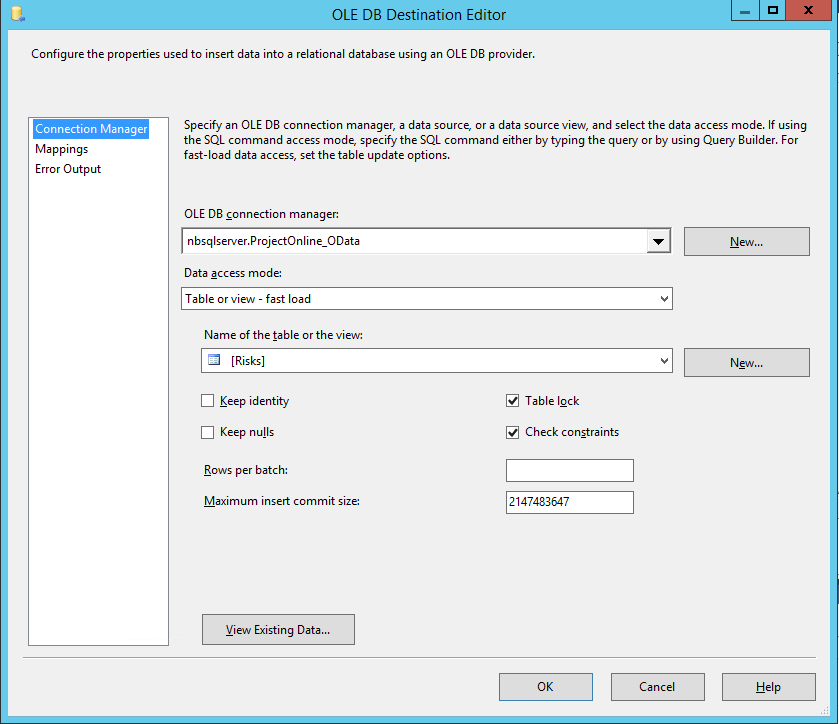

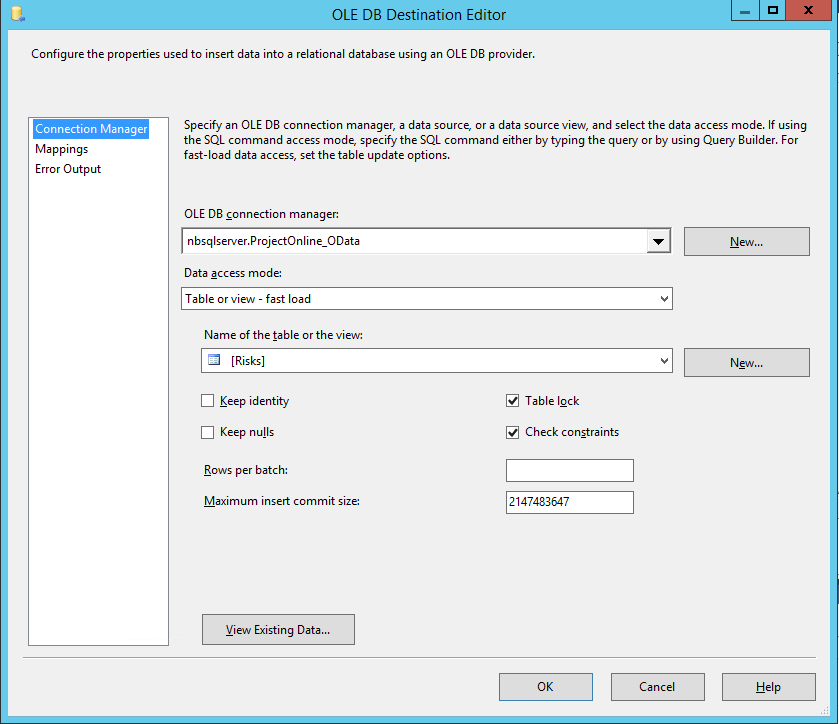

- Finally, use the Destination Assistant to create and configure our Risks database table where the data will be saved

- Configure the mappings as required, and double-check that ProjectId has been mapped automatically

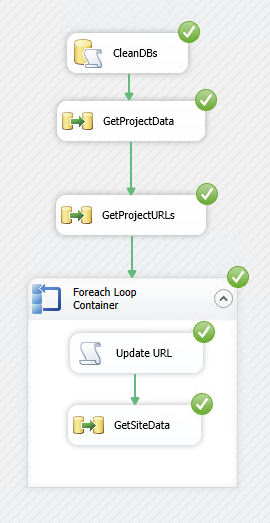

We’re just about done; the Data Flow should now look like this:

Final touches

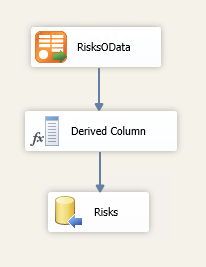

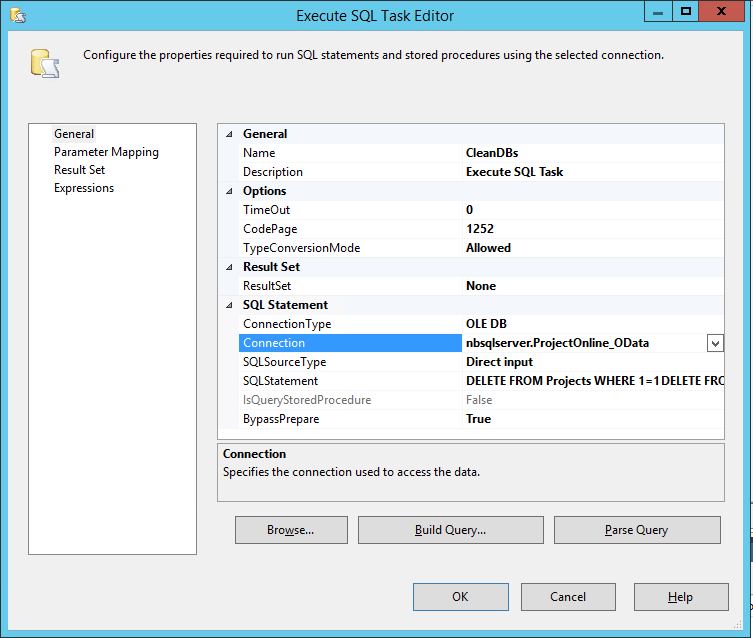

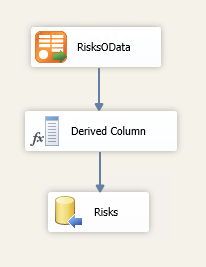

The solution should now run and work as expected, with one catch: each run appends all of the data again. As a quick (hackish) fix, the final step is to add a task at the very beginning that deletes the existing data in our report database.

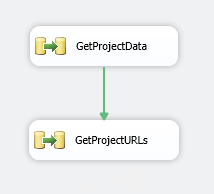

- On the Control Flow tab, add one more Execute SQL Task to the top of the canvas and make our first GetProjectData task follow it.

- Edit the properties and select our temp database ProjectOnline_OData as the Connection

- Then set the following as the SQL Query (Note: don’t use Build Query, as that won’t let you cut and paste; just paste it into the SQLStatement field):

    DELETE FROM Projects WHERE 1=1
    DELETE FROM Tasks WHERE 1=1
    DELETE FROM Risks WHERE 1=1

- No other options need be changed

Done. Test it out, and it should look something like this when run:

Final words

That was easy, wasn’t it? Aren’t we all glad to not need the SSRS SharePoint list datasource anymore! *ahem* [sarcasm]

Now that you have your data warehouse of project data and all artifacts, your SSRS report writing should be not only simpler but also much faster performing.

Download Source

Download the full solution here and post any questions below.

ProjectOnline_OData.zip

To use this you will need to update all your data connections and recreate the destination tables (as per the steps above).

by Martin Laukkanen | Mar 31, 2011 | Reporting, SQL

A long time ago (it seems) I blogged about reporting directly from the SharePoint workspace sites using SQL Reporting Services to get around the limitations with the reporting database refresh. Using XML provided a neat method for pulling data directly from all the out-of-the-box lists such as Risks and Issues, as well as other custom lists.

Unfortunately, setup and maintenance were difficult, requiring some knowledge of web services as well as XML syntax. Fortunately, with SQL Server 2008 R2 things have improved drastically!

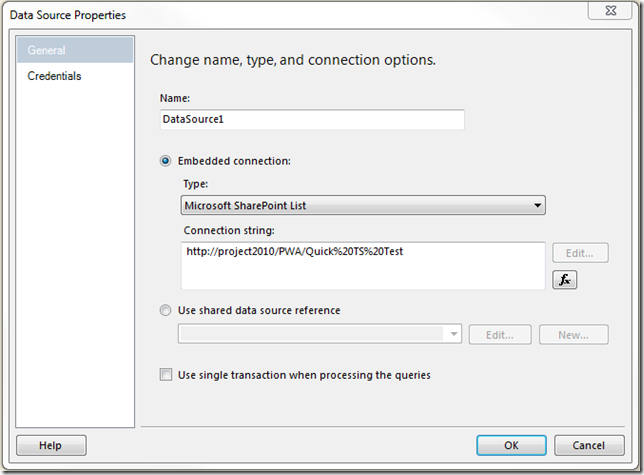

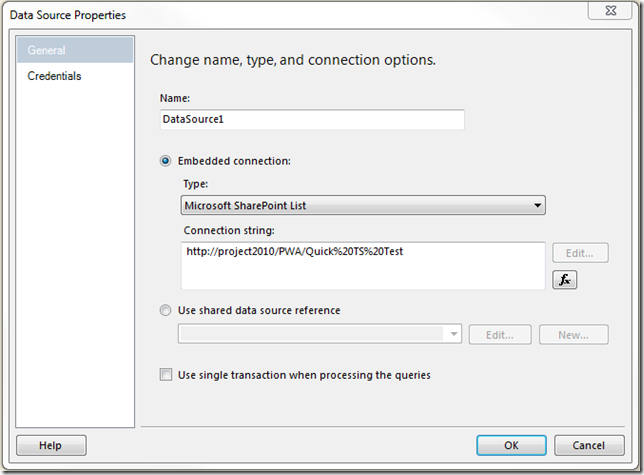

What’s New: Now you can directly create ‘Microsoft SharePoint List’ data sources!

See the screenshot:

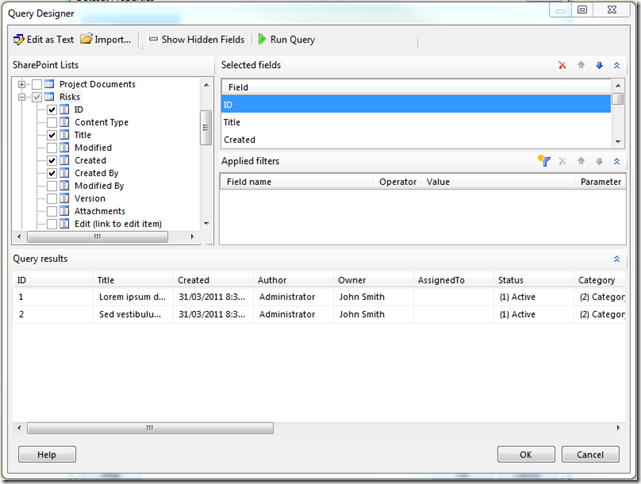

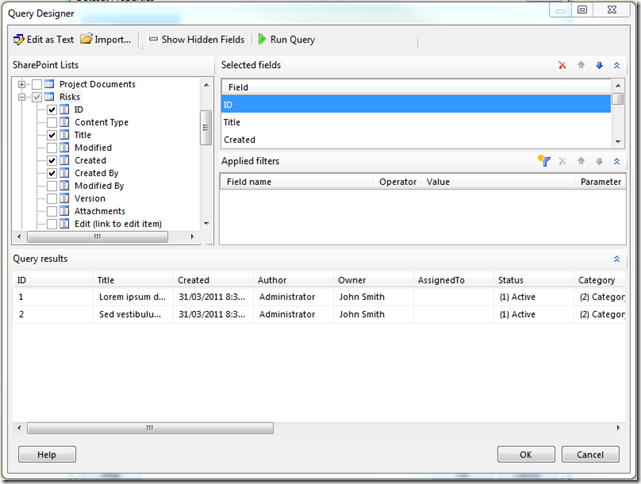

Better yet, when you create a Data Set using the above data source, the Query Designer is completely new:

Finally, no more messing around with column names in XML or needing specific views configured in your SharePoint sites.

That should make things much easier!

BTW, it’s definitely worth mentioning that the above also works with Report Builder 3.0!